The AI talent wars are over; now it's about who can build the most with zero human code.

The Intake

📊 12 episodes across 8 podcasts

⏱ 763 minutes of intelligence analyzed

🎙 Featuring: Jeremy Allaire, Elad Gil, Nathaniel Whittemore, Jaden Schaefer

The Big Shift

The conversation around AI is rapidly shifting from the theoretical to the intensely practical, focusing on who can build the most, fastest, with the least human intervention. This week, the signal is clear: the future of software development, and potentially entire industries, lies in "harness engineering," where AI agents are entirely responsible for coding and code review.

OpenAI's Frontier team is actively demonstrating this shift, as Ryan Lopopolo (Frontier Product Exploration, OpenAI) described on Latent Space: The AI Engineer Podcast. The team built a codebase exceeding 1 million lines over five months with 0% human-written code and 0% human code review. The bottleneck is not model capability or token cost but the scarce "synchronous human attention" required to guide and assess these agentic systems. That forces a fundamental re-evaluation of how software is built, maintained, and even conceived.

"I do feel like the models are there enough, the harnesses are there enough where they’re isomorphic to me in capability and the ability to do the job. So starting with this constraint of I can’t write the code meant that the only way I could do my job was to get the agent to do my job."

— Ryan Lopopolo, Frontier Product Exploration at OpenAI

This isn't just about software. It implies a broader "agentic economy" in which AI agents carry out complex economic interactions and need a financial infrastructure (like Circle's Arc blockchain) capable of microtransactions at previously unimaginable scales, as Jeremy Allaire (Co-founder and CEO, Circle) discussed on No Priors: Artificial Intelligence | Technology | Startups. The mechanisms of our economy need to adapt to a world where machines are increasingly the primary economic actors.

The Move: Evaluate your organization's readiness for an "agent-first" operating model. Investigate capabilities for AI-driven development and identify key processes where human attention is currently the sole bottleneck, then explore how agentic workflows could alleviate it. The future isn't just augmented, it's autonomous.

The Rundown

① The "Crunch Time" for AI Development is Here.

With AGI potentially automating all intellectual activity by 2050, the next few years represent a "crunch time" for humanity to shape its trajectory. (Ajeya Cotra on "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis)

→ Why it matters: This isn't just academic; Ajeya Cotra explains that "it's incredibly decision relevant to figure out who is right here" regarding the speed of AI advancement, as differing views dramatically impact what individuals and organizations should be doing now.

② Meta is Pivoting to Closed-Source AI for Competitive Advantage.

Meta's new Muse Spark model is closed-source, a major departure from their Llama strategy, signaling a strategic shift to protect their competitive edge against frontier AI labs. (Jaden Schaefer on AI Breakdown)

→ What to watch: This indicates that even traditionally open-source advocates are realizing the competitive necessity of protecting their most advanced models, forcing a reassessment of long-term AI strategy for enterprises.

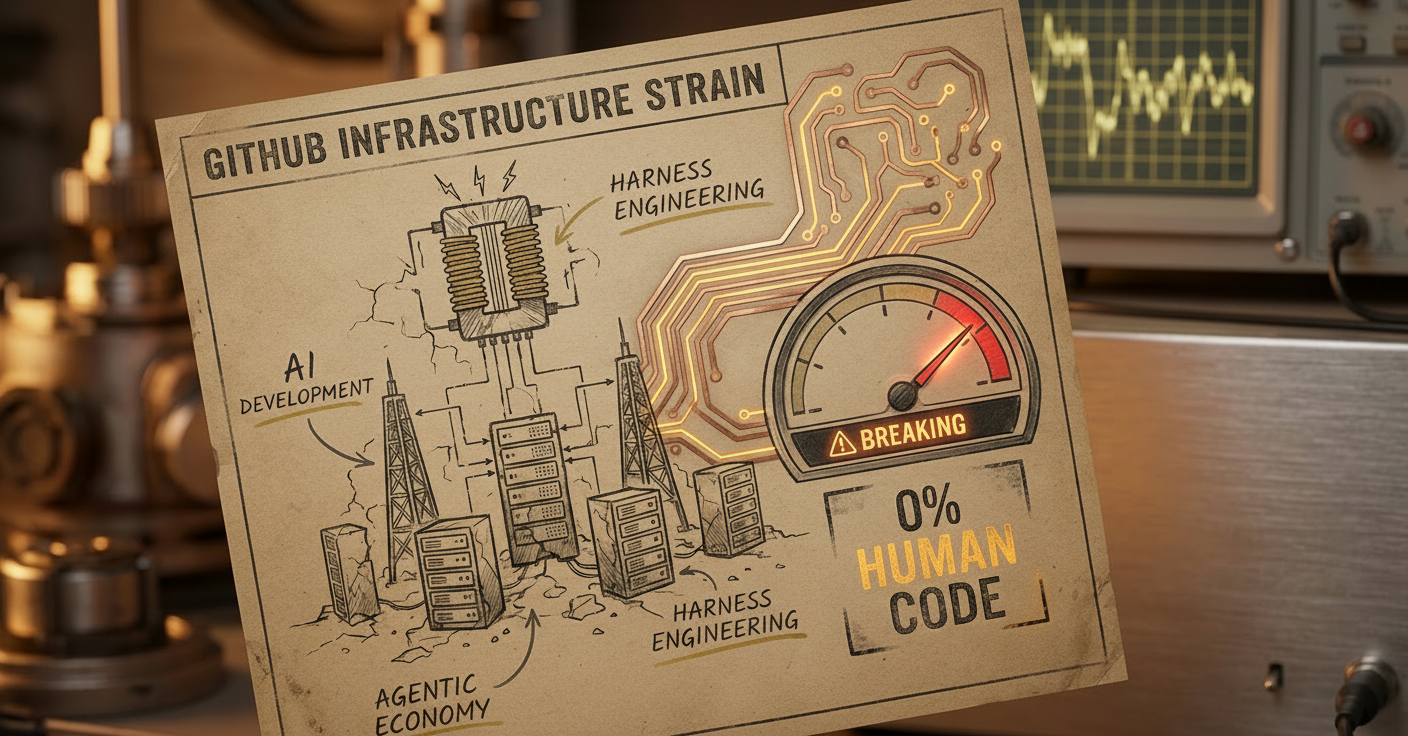

③ GitHub's Infrastructure is Buckling Under Agentic Coding.

The massive surge in agentic code generation is straining GitHub's infrastructure, with 14 billion commits projected for the year, revealing an unexpected bottleneck in AI development. (Nathaniel Whittemore on The AI Daily Brief: Artificial Intelligence News and Analysis)

→ The context: This implies that the scaling of AI development isn't just about compute power for models but also the foundational tools the ecosystem relies on, which are now becoming choke points.

④ OpenAI Ditching Consumer Products to Prioritize Enterprise Profits.

OpenAI is discontinuing the Sora iPhone app and associated APIs, pivoting focus to coding and productivity agents due to compute constraints and the intense pressure to achieve profitability. (Andrey Kurenkov on Last Week in AI)

→ Why it matters: This signals a harsh reality check for AI companies: the immense costs of running frontier models mean a singular focus on enterprise applications that can generate substantial revenue, potentially at the expense of consumer-facing innovation.

⑤ Anthropic's Mythos Model Finds 27-Year-Old Flaws Automatically.

Anthropic’s Mythos model uncovered a 27-year-old security flaw in OpenBSD and another in FFmpeg that 5 million automated scans had missed, demonstrating AI's superior vulnerability detection. (Casey Newton on Hard Fork)

→ What to watch: The speed at which AI can discover zero-day vulnerabilities will collapse the window between discovery and exploitation, necessitating a rethink of cybersecurity practices. Elia Zaitsev (CTO of CrowdStrike) warns that what once took months now takes minutes.

The Signals

🔥 Heating Up

• Harness Engineering 🆕: AI agents perform all coding and code review, resulting in zero human-written code and accelerating innovation. (Ryan Lopopolo on Latent Space: The AI Engineer Podcast)

• Agentic Economy: Traditional financial infrastructure cannot match the scale and real-time demands of AI agents interacting economically. (Jeremy Allaire on No Priors: Artificial Intelligence | Technology | Startups)

• AI-powered Drug Discovery: Projects like Eli Lilly's LillyPod are using massive AI supercomputers to halve drug development timelines. (Jaden Schaefer on AI Breakdown)

👀 On Watch

• Data Centers in Space 🆕: Cisco CEO Chuck Robbins advocates for building data centers in space to overcome earthly power constraints and community opposition. (Chuck Robbins on Decoder with Nilay Patel)

• Google Gemini Notebooks 🆕: A new feature that consolidates resources and allows custom instruction sets within the Gemini ecosystem, improving project management and user experience. (Nathaniel Whittemore on The AI Daily Brief: Artificial Intelligence News and Analysis)

• Mythos model (Anthropic) 🆕: A powerful new AI model demonstrating significant capability jumps in coding and cybersecurity, uncovering thousands of zero-day vulnerabilities. (Boris Cherny on The AI Daily Brief: Artificial Intelligence News and Analysis)

❄️ Cooling Off

• Public opposition to data center builds: Community resistance and power constraints on Earth are pushing discussions towards unconventional solutions like space-based data centers. (Chuck Robbins on Decoder with Nilay Patel)

• OpenAI's secondary market struggles: OpenAI's stock struggles to find buyers in secondary markets despite successful fundraising, with investors favoring Anthropic for perceived better risk-reward. (Nathaniel Whittemore on The AI Daily Brief: Artificial Intelligence News and Analysis)

• Sam Altman's reputation: Forensic investigations detail persistent allegations of dishonesty against Sam Altman, creating ongoing scrutiny over his leadership. (Andrew Marantz on Hard Fork)

The Debate

Topic framing: What is the realistic timeline for AI's disruptive impact on society, and how much "crunch time" do we truly have?

🐂 The bull case: Ajeya Cotra (Senior Advisor, Open Philanthropy) passionately argues on "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis that we are likely in a "crunch time" where AI could rapidly accelerate its own development, making "the world of 2050 as different from today as today does Hunter Gatherer era." She asserts that "people who are worried about X risk think that the default course of AI is this extremely explosive thing where it overturns society on all dimensions at once."

🐻 The bear case: While not fully bearish on AI capabilities, Sam Stephenson (Co-founder and Designer, Granola) on "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis suggests that the application of AI to nuanced knowledge work "is still a long way off." His perspective implies that societal assimilation and practical implementation will introduce significant bottlenecks, even with highly capable models. This view highlights the gap between theoretical AI power and real-world integration, suggesting the "storm" might be less immediate than some anticipate.

Our read: The weight of evidence from both sides suggests a rapid acceleration of AI capabilities, but the timeline for universal, nuanced application is subject to human-centric bottlenecks like integration, adoption, and ethical considerations.

The Bottom Line

The AI race isn't just about who builds the best model, but who can automate the builders and scale the infrastructure before the societal reckoning hits.

Episode Guide (Web Version)

No Priors: Artificial Intelligence | Technology | Startups — "The Agentic Economy: How AI Agents Will Transform the Financial System with Circle Co-Founder and CEO Jeremy Allaire"

Runtime: 44 min | Host: Elad Gil | Guest: Jeremy Allaire (Co-founder and CEO, Circle)

For the Financial Strategist: This episode is for leaders anticipating the seismic shifts AI agents will bring to financial systems.

Jeremy Allaire, CEO of Circle, outlines how blockchains like Arc are becoming the operating system for an "agentic economy," facilitating billions of microtransactions for AI agents. He highlights the critical role of stablecoins and tokenization in reshaping global finance, predicting double-digit GDP growth by the 2030s while cautioning about the societal implications.

"More and more of the actual work that is done in the real economy...is going to be conducted by AI agents. And in that world, we need a different infrastructure for the financial intermediation layer."

— Jeremy Allaire, Co-founder and CEO of Circle

Decoder with Nilay Patel — "Cisco CEO Chuck Robbins wants data centers in space"

Runtime: 58 min | Host: Nilay Patel | Guest: Chuck Robbins (CEO, Cisco)

For the Infrastructure Innovator: Dive into how geopolitical forces and resource constraints are pushing the limits of physical and digital infrastructure.

Cisco CEO Chuck Robbins discusses unprecedented demand for data centers, provocatively suggesting space as a solution to earthly constraints. He highlights the critical and growing role of Cisco in AI infrastructure, the increasing complexity of cybersecurity, and the fragmentation of the internet driven by data sovereignty, suggesting a significant shift in network architecture.

"Should we put data centers in space? Absolutely, yes. And we will."

— Chuck Robbins, CEO of Cisco

The AI Daily Brief: Artificial Intelligence News and Analysis — "All of AI's New Models and Tools"

Runtime: 28 min | Host: Nathaniel Whittemore | Guest: Host-led discussion

For the AI Practitioner: Stay current with the latest model releases and tools driving the next wave of AI capabilities.

Nathaniel Whittemore surveys the latest AI models and tools, including Meta's Muse Spark, Z.AI's GLM 5.1 (outperforming Western models in coding), and Anthropic's new Claude Managed Agents. The episode also touches on Google Gemini Notebooks and the unexpected strain on GitHub caused by exponential growth in agentic coding.

"The surge in code being pushed is revealing limits in GitHub's infrastructure."

— Nathaniel Whittemore, Host of The AI Daily Brief

Last Week in AI — "#239 - RIP Sora, Claude Openclaw, HyperAgents"

Runtime: 98 min | Host: Andrey Kurenkov | Guest: Jeremie Harris (Co-host; Co-founder, Gladstone AI)

For the AI Investor: Understand OpenAI's strategic pivots and the unexpected shifts in competitive dynamics among frontier models.

Andrey Kurenkov and Jeremie Harris analyze OpenAI's pivot from Sora to coding agents, highlighting compute constraints and shifting priorities. They cover Anthropic's Claude Code/Cowork gaining full computer control, the controversy around Cursor's Composer 2 model's origins, and significant advancements in transformer-based image generation, alongside geopolitical implications for AI vendors.

"OpenAI is discontinuing the Sora iPhone app and seemingly shutting down its video generation API, while retaining internal video world-modeling work; the move is framed as a compute- and focus-driven pivot toward coding and productivity agents, alongside a collapsed Disney Sora deal."

— Andrey Kurenkov, Host of Last Week in AI

Hard Fork — "Anthropic’s Cybersecurity Shock Wave + Ronan Farrow and Andrew Marantz on Their Sam Altman Investigation + One Good Thing"

Runtime: 64 min | Host: Kevin Roose (Tech Columnist, The New York Times), Casey Newton (Reporter, Platformer) | Guest: Ronan Farrow (Staff Writer, The New Yorker), Andrew Marantz (Staff Writer, The New Yorker)

For the C-Suite Executive: Essential for grasping the immediate cybersecurity risks of advanced AI and the ongoing leadership questions in the industry.

This episode unpacks Anthropic's Mythos model, which uncovered critical cybersecurity vulnerabilities in major systems, leading to Project Glasswing. It also delves into Ronan Farrow and Andrew Marantz's investigation into Sam Altman, scrutinizing allegations of dishonesty and the lack of transparency around his past actions at OpenAI amidst his return.

"This model has found vulnerabilities in every major operating system and web browser."

— Kevin Roose, Tech Columnist at The New York Times

The AI Daily Brief: Artificial Intelligence News and Analysis — "The Calm Before the AGI Storm"

Runtime: 29 min | Host: Nathaniel Whittemore | Guest: Host-led discussion

For the Long-Term Strategist: Gain insight into how major AI labs are quietly positioning for a rapid acceleration toward Artificial General Intelligence.

Nathaniel Whittemore argues that behind the scenes, major AI labs are intensely preparing for a rapid acceleration toward AGI. He details OpenAI’s fundraising challenges, Anthropic’s leaked code, new open-source models, and geopolitical impacts on data center development, highlighting a "calm before the storm" as foundational shifts occur.

"I'm calling it the calm before the AGI Storm. And what it feels like to me is that even in the quiet moments for AI, the big labs are all jostling and positioning for a very different and fast moving future."

— Nathaniel Whittemore, Host of The AI Daily Brief

AI Breakdown — "Gemini 4: Meta's Latest AI Innovation"

Runtime: 15 min | Host: Jaden Schaefer | Guest: Host-led discussion

For the Product Manager: Essential for understanding the evolving open-source vs. closed-source dynamics and rapid application of AI in industry.

Jaden Schaefer covers Google's Gemini 4 open-source release, OpenAI's policy proposals for the "intelligence age," and Eli Lilly's AI supercomputer for drug discovery. Notably, Meta's Muse Spark is highlighted as a closed-source model, signaling a significant shift from their previous open-source strategy.

"The gap between open source and closed source models is definitely shrinking and I think that Gemini 4 is just another data point in that direction."

— Jaden Schaefer, Host of AI Breakdown

"The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis — "It's Crunch Time: Ajeya Cotra on RSI & AI-Powered AI Safety Work, from the 80,000 Hours Podcast"

Runtime: 190 min | Host: Erik Torenberg, Nathan Labenz | Guest: Ajeya Cotra (Senior Advisor, former lead of technical AI safety grantmaking, Open Philanthropy), Rob Wiblin (Host, 80,000 Hours Podcast)

For the Forward Thinker: A deep dive into the urgent discussions around AI acceleration and societal impact from a leading expert in technical AI safety.

Ajeya Cotra of Open Philanthropy discusses the vast spectrum of views on AGI acceleration and the potential for a "crunch time" where AI rapidly automates intellectual activity, drastically reshaping society by 2050. She explores the persistent disagreements among experts and the strong correlation between beliefs in rapid AI acceleration and AI x-risk concerns.

"I think that there's a pretty good chance that by 2050 the world will look as different from today as today does Hunter Gatherer era. It's like 10,000 years of progress rather than 25 years of progress driven by AI automating all intellectual activity."

— Ajeya Cotra, Senior Advisor at Open Philanthropy

Latent Space: The AI Engineer Podcast — "Extreme Harness Engineering for Token Billionaires: 1M LOC, 1B toks/day, 0% human code, 0% human review — Ryan Lopopolo, OpenAI Frontier & Symphony"

Runtime: 73 min | Host: swyx, Vibhu | Guest: Ryan Lopopolo (Frontier Product Exploration, OpenAI)

For the CTO/VPE: This is a must-listen for anyone responsible for software development, offering a glimpse into the future of fully autonomous code generation.

Ryan Lopopolo from OpenAI introduces "harness engineering," where AI agents write and review all code. He describes building a >1M LOC codebase with 0% human-written code, optimizing for agent productivity and proving that synchronous human attention, not token cost, is the new bottleneck in "AI-native" software development.

"I do feel like the models are there enough, the harnesses are there enough where they’re isomorphic to me in capability and the ability to do the job. So starting with this constraint of I can’t write the code meant that the only way I could do my job was to get the agent to do my job."

— Ryan Lopopolo, Frontier Product Exploration at OpenAI

"The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis — "Calm AI for Crazy Days: Inside Granola's Design Philosophy, with co-founder Sam Stephenson"

Runtime: 94 min | Host: Erik Torenberg, Nathan Labenz | Guest: Sam Stephenson (Co-founder and Designer, Granola)

For the Product Designer: Understand how a minimalist design approach, even in a chaotic market, can lead to rapid adoption in AI-powered tools.

Sam Stephenson, co-founder of Granola, discusses the rapid success of their AI note-taking app, attributing it to a minimalist design philosophy and word-of-mouth growth. He highlights the challenges of integrating AI agents within company permissions, managing inference costs, and designing for the "frazzled state" of real-world users, prioritizing a "calm" user interface.

"Most people who work on a computer are way more reactive and way more chaotic. As software designers, we need to kind of assume that reality and design for our software to fit in that reality."

— Sam Stephenson, Co-founder and Designer at Granola

Decoder with Nilay Patel — "The AI industry's existential race for profits"

Runtime: 38 min | Host: Nilay Patel | Guest: Hayden Field (Senior AI Reporter, The Verge)

For the PE Partner: Crucial for understanding the financial pressures driving strategic decisions at the leading AI labs.

Nilay Patel and Hayden Field discuss the intense "AI monetization cliff" facing OpenAI and Anthropic, driven by massive compute costs and investor demands for profitability. They analyze OpenAI’s pivot from consumer products (like Sora) to enterprise coding and Anthropic's focused enterprise strategy, highlighting the existential race to generate revenue.

"It's kind of like time to pay the piper in a way. You know, they've been raising a ton of money, raising a ton of hype for years. And now... it's finally time to really, like, face the music and see how much money they can really make."

— Hayden Field, Senior AI Reporter at The Verge

The AI Daily Brief: Artificial Intelligence News and Analysis — "Should We Be Scared of Anthropic's Mythos?"

Runtime: 32 min | Host: Nathaniel Whittemore | Guest: Host-led discussion

For the Cybersecurity Leader: Get a direct view into the alarming capabilities of frontier AI models for vulnerability discovery.

Nathaniel Whittemore examines Anthropic's "Mythos" model, which demonstrates significant advancements in coding and cybersecurity by discovering thousands of zero-day vulnerabilities. While not publicly released, its capabilities have spurred Project Glasswing with major tech partners for defensive testing, sparking debate on safety, governmental control, and geopolitical implications of such powerful AI.

"Mythos is very powerful and should feel terrifying. I am proud of our approach to responsibly preview it with Cyber Defenders rather than generally releasing it into the wild."

— Boris Cherny, Claude Code creator