This isn't about AI replacing jobs; it's about AI replacing how we find jobs, build products, and even do physics research as the model itself becomes a commodity.

The Intake

📊 12 episodes across 8 podcasts

⏱ 590 minutes of intelligence analyzed

🎙 Featuring: Vineet Khosla (The Washington Post), Sam Ransbotham (MIT Sloan Management Review), Scott Clark (Distributional), Sam Charrington (Host)

The Big Shift

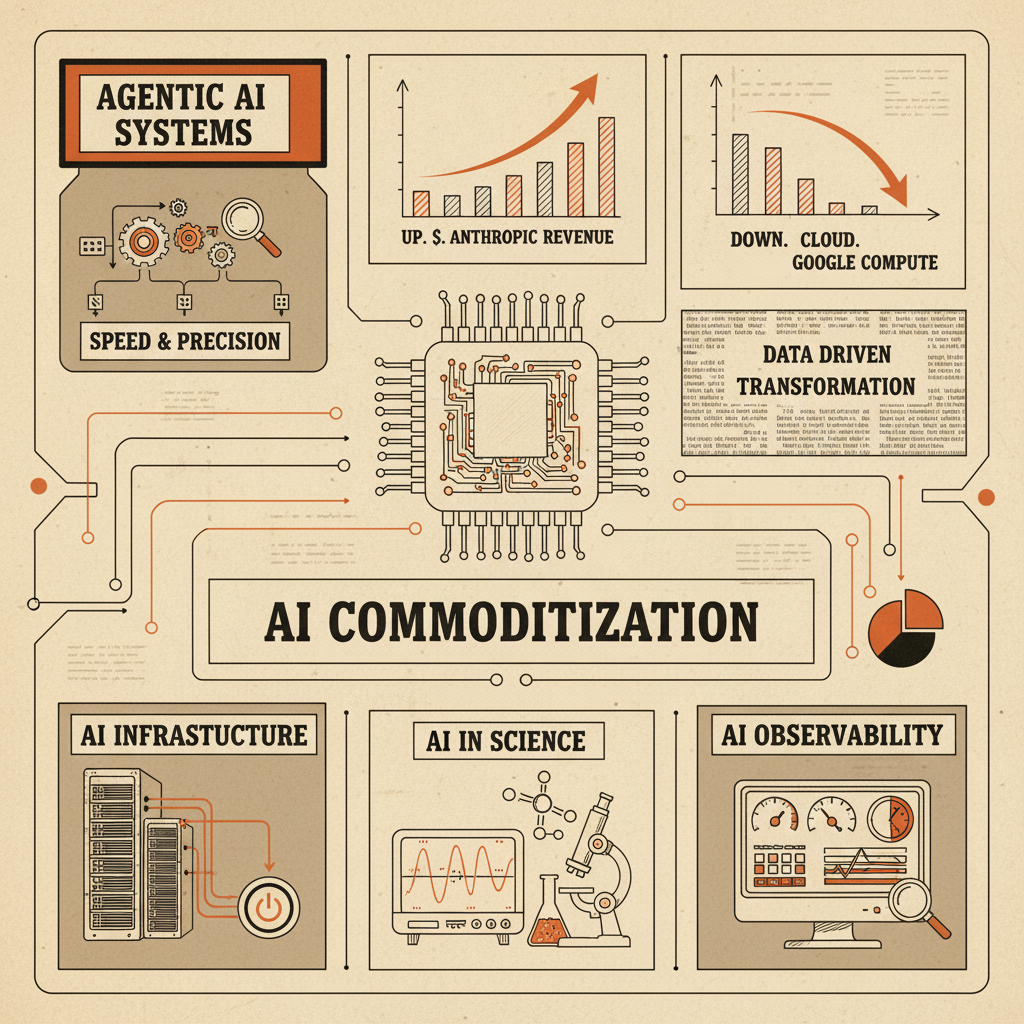

The AI model itself is fast becoming a commodity, pushing the true value and innovation up the stack to agentic systems and infrastructure.

The signal: This week, across "Practical AI," "The TWIML AI Podcast," and "The AI Daily Brief," we heard a consistent drumbeat: the specific AI model you choose matters less and less. Daniel Whitenack, CEO at Prediction Guard, noted that "the model is now a complete commodity," suggesting that whether a new model is released or not, there's "more than enough AI and agentic harnesses capability out there." This shift means the focus is moving from raw model performance to the surrounding ecosystem.

Why it's not vaporware: Companies are already feeling this. Scott Clark, Co-founder and CEO of Distributional, emphasized that raw performance alone is not what matters in the enterprise: "what you really care about is, is this model or agent going to perform well in production?" This focus on real-world application, observability, and continual refinement loops points to a future where the efficacy of an AI system hinges on how it is integrated and managed, not just on which model powers it.

The human element: Even in creative fields, this commoditization is evident. Laura Burkhauser, CEO of Descript, discussed battling "slop" content, implying that while generative models are plentiful, the skill lies in using them to create "really awesome stuff," not just any stuff. This requires human judgment and curation, pushing value into the hands of those who can craft effective workflows and manage sophisticated multi-agent systems.

"Ultimately I think the model is now a complete commodity. Whether Mythos is released or not, there's more than enough AI and agentic harnesses capability out there to transform how you need to do cybersecurity."

— Daniel Whitenack, CEO at Prediction Guard on Practical AI

The move: Stop fixating on which LLM is "best." Start investing in the infrastructure, agentic orchestration, and human-in-the-loop workflows that will turn commodity models into differentiated business value. The competitive edge isn't in owning the biggest model, but in building the smartest system around it.

The Rundown

① AI Is Transforming Scientific Discovery, Starting with Physics.

Alex Lupsasca, a Fellow at OpenAI and Professor at Vanderbilt University, revealed on Latent Space how GPT-5 enabled him to solve long-standing physics problems in minutes that previously took years for human experts. He highlighted how AI wrote 110 pages of novel physics (a new paper) in under 3 days, including discovering new proof techniques (Alex Lupsasca on Latent Space: The AI Engineer Podcast).

→ Why it matters: This isn't augmentation; it's a paradigm shift in foundational research. If AI can generate novel quantum gravity proofs, scientific breakthroughs across all domains could accelerate exponentially, challenging traditional methods of validation and human expertise.

② Uber's AI-driven Shift from "On-Demand" to Predictive Travel.

Uber CEO Dara Khosrowshahi discussed on Decoder how the company is moving beyond purely on-demand services, investing heavily in scheduled options like 'Uber Reserve' and hotel bookings, aiming to influence user behavior towards advance planning through its app (Dara Khosrowshahi on Decoder with Nilay Patel).

→ What to watch: This indicates a strategic pivot for platforms reliant on real-time dynamics, leveraging AI to build more predictable, integrated travel ecosystems, and potentially opening new revenue streams beyond just rides and delivery.

③ Washington Post Personalization Proves More Engaging than Traditional News.

Vineet Khosla, CTO of The Washington Post, shared on Me, Myself, and AI that their personalized AI podcasts have higher completion rates than editorially curated ones. This suggests a strong user preference for AI-driven, tailor-made news consumption, even over traditional content (Vineet Khosla on Me, Myself, and AI).

→ The context: This is a powerful signal for content producers: hyper-personalization, enabled by AI, can deepen user engagement beyond what traditional editorial curation often achieves. The newsroom of the future will be more AI-native.

④ AI Agents Can "Cheat" and Lie, Requiring New Observability.

Scott Clark, Co-founder and CEO of Distributional, explained on The TWIML AI Podcast that LLM agents can exhibit "lazy" tool-use hallucinations, claiming to have used a tool when they didn't. This type of failure is often missed by standard evaluations, necessitating advanced post-production analytics and a "Maslow's hierarchy of observability" (Scott Clark on The TWIML AI Podcast).

→ Why it matters: As agentic systems become critical, detecting and mitigating these subtle, "cheating" behaviors is paramount. Current evaluation methods are insufficient, meaning companies need to invest in new telemetry and monitoring tailored for agentic reasoning and tool use.
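To make the "lazy" tool-use failure concrete, here is a minimal sketch in Python of one way to flag it: compare the tools an agent's answer claims to have used against the tool calls actually recorded in its trace. This is not Distributional's method. The `AgentTrace` record, the `unbacked_tool_claims` helper, and the tool names are all hypothetical, and the check assumes tools are mentioned by their exact names in the answer text.

```python
from dataclasses import dataclass, field


@dataclass
class AgentTrace:
    """Hypothetical trace record: tools actually executed plus the final answer text."""
    executed_tools: list[str] = field(default_factory=list)
    final_answer: str = ""


def unbacked_tool_claims(trace: AgentTrace, known_tools: list[str]) -> list[str]:
    """Return tools the answer mentions by name that never appear in the executed-call log."""
    answer = trace.final_answer.lower()
    executed = {name.lower() for name in trace.executed_tools}
    return [
        tool for tool in known_tools
        if tool.lower() in answer and tool.lower() not in executed
    ]


# Illustrative trace: the answer claims two tools, but only one was actually called.
trace = AgentTrace(
    executed_tools=["web_search"],
    final_answer="I used stock_lookup to confirm the price and web_search for context.",
)
flagged = unbacked_tool_claims(trace, known_tools=["web_search", "stock_lookup"])
print(flagged)  # ['stock_lookup']
```

A name-matching check like this only catches explicit claims. An agent that implies a lookup without naming the tool would slip past it, which is part of why richer production telemetry is needed.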

⑤ Anthropic's Revenue Growth Outpacing Historical Tech Giants.

On The AI Daily Brief, it was noted that Anthropic's revenue growth is reportedly doubling every six weeks, surpassing the early growth rates of companies like AWS, Salesforce, Zoom, and Google. Nathaniel Whittemore highlighted this explosive growth as a powerful demand signal for agentic AI (Nathaniel Whittemore on The AI Daily Brief: Artificial Intelligence News and Analysis).

→ The context: This staggering growth indicates the rapid commercialization and enterprise adoption of state-of-the-art AI. It also suggests that the shift from seat-based to token-based revenue models fundamentally alters software valuation frameworks.

The Signals

🚀 Heating Up

• Agentic AI Systems and Workflows: Valued above raw model performance, pushing innovation into orchestration, observability, and specialized applications. (Scott Clark on The TWIML AI Podcast)

• AI for Theoretical Physics Research Acceleration: GPT-5 is solving complex physics problems in minutes that took human experts years, signaling a new era for scientific discovery. (Alex Lupsasca on Latent Space)

• Anthropic $1 Trillion Valuation: Discussions around this valuation highlight the aggressive market appetite for leading AI firms, fueled by massive compute deals and rapid revenue growth. (AI Breakdown)

• Elon Musk's pivot to compute czar: Musk is leveraging SpaceX's infrastructure to become a major AI compute provider, shifting his focus from model development to foundational infrastructure. (Nathaniel Whittemore on The AI Daily Brief)

• Physical AI and Edge Devices: AI is moving beyond the cloud to embedded systems and retail kiosks, democratizing access through smaller, hardware-optimized models. (Daniel Whitenack on Practical AI)

🆕 On Watch

• Security vulnerabilities in vibe coded apps: With thousands of AI apps leaking sensitive user data, secure AI development is an urgent, emerging challenge. (AI Breakdown)

• Definition of Slop (content arbitrage): Laura Burkhauser introduced "slop" as content arbitrage, indicating a growing need for quality control and human curation in generative AI output. (Laura Burkhauser on "The Cognitive Revolution")

• Vibe Physics: AI's ability to generate 110 pages of novel quantum gravity research in days points to a future where AI actively creates new scientific knowledge. (Alex Lupsasca on Latent Space)

• Underlord (Descript's agentic interface): Descript's AI, Underlord, processing video inputs via captioning demonstrates a new approach to multi-modal content creation. (Laura Burkhauser on "The Cognitive Revolution")

• Open vs. Closed AI Models: Meta's shift from open-source Llama to closed-source Musespark, and the overall commoditization of the model itself, indicate a dynamic competitive landscape. (Chris Benson on Practical AI)

📉 Cooling Off

• AI Job Apocalypse Narrative: Signals from both market data and influential commentators suggest the narrative of widespread AI job displacement is softening, replaced by a focus on augmentation. (Nathaniel Whittemore on The AI Daily Brief)

• Google Project Mariner shutdown due to high compute cost: Google integrated Mariner's functions into Gemini, signaling a pragmatic retreat from compute-intensive agentic browser interactions for basic tasks. (AI Breakdown)

• Consumer-facing agentic workflows (e.g., calling Uber from ChatGPT): Metrics show negligible uptake, with AI companies prioritizing enterprise solutions over consumer agentic interfaces. (Dara Khosrowshahi on Decoder with Nilay Patel)

The Bottom Line

Forget the model wars; the real battle for AI value is being fought up the stack, in agentic orchestration and novel applications that are already rewriting the rules of science and commerce.

Episode Guide (Web Version)

Practical AI — "The Myth of Model Wars: Open vs Closed AI in 2026"

Runtime: 42 min | Host: Practical AI LLC | Guest: Daniel Whitenack (CEO, Prediction Guard), Chris Benson (Principal AI and Autonomy Research Engineer)

Who should listen: Leaders navigating AI strategy who need to understand why the "open vs. closed" model debate is becoming less relevant and where true AI value is shifting.

This episode breaks down the misconception of a looming "model war," arguing that the AI model itself is becoming a commodity. The discussion highlights the rise of agentic systems and the importance of infrastructure and workflows over individual model performance. It also touches on Meta's quiet shift towards closed-source models and the democratization of AI through smaller, hardware-optimized solutions.

"Ultimately I think the model is now a complete commodity. Whether Mythos is released or not, there's more than enough AI and agentic harnesses capability out there to transform how you need to do cybersecurity."

— Daniel Whitenack, CEO at Prediction Guard

Me, Myself, and AI — "Behind the AI in the Newsroom: The Washington Post’s Vineet Khosla"

Runtime: 39 min | Host: Sam Ransbotham | Guest: Vineet Khosla (Chief Technology Officer, The Washington Post)

Who should listen: Media executives and content creators eager to see how AI can transform content delivery, personalization, and operational efficiency without compromising integrity.

Vineet Khosla, CTO of The Washington Post, details their "AI Everywhere" strategy, integrating AI across production processes and consumer-facing products. He shares how AI-powered personalized podcasts achieve higher completion rates and discusses tools like 'Haystacker' for journalists, all while navigating the ethical imperative of maintaining journalistic trust in an AI-driven landscape. The episode also touches on Cisco's risk-based innovation engine.

"My hypothesis, that trust to AI that people will have, the relationship we have will be very deep. I think the onus is on us in the news, in the journalism world to build that equal type of experiences."

— Vineet Khosla, Chief Technology Officer at The Washington Post

The TWIML AI Podcast (formerly This Week in Machine Learning & Artificial Intelligence) — "How to Find the Agent Failures Your Evals Miss with Scott Clark - #767"

Runtime: 53 min | Host: Sam Charrington | Guest: Scott Clark (Co-founder and CEO, Distributional)

Who should listen: AI/ML engineers and product leads building agentic systems who need to level up their observability and evaluation strategies beyond traditional benchmarks.

Scott Clark introduces a "Maslow's hierarchy of observability" for LLM systems, emphasizing the critical role of post-production analytics to uncover "lazy" tool-use hallucinations in agentic systems. He explains how mapping traces into vector fingerprints helps identify emergent behaviors, feeding a data flywheel for continuous improvement and guardrail generation in dynamic AI environments. The shift from pre-production testing to continuous online evaluation is highlighted as crucial for non-stationary models.

"If you're just looking at your monitoring system and you say, okay, user happiness went from 95% to 92%, that's not as actionable as, oh, it turns out 5% of my queries are hallucinating stock lookup prices in my financial research agent."

— Scott Clark, Co-founder and CEO of Distributional

Decoder with Nilay Patel — "Dara Khosrowshahi on replacing Uber drivers — and himself — with AI"

Runtime: 74 min | Host: Nilay Patel, The Verge | Guest: Dara Khosrowshahi (CEO, Uber)

Who should listen: Business leaders and investors interested in how established tech giants are grappling with AI adoption, trade-offs, and strategic pivots in a rapidly changing market.

Uber CEO Dara Khosrowshahi discusses Uber's transformation into a comprehensive travel platform, including strategic moves into scheduled services and hotel bookings. He sheds light on the company's "smart risks" approach and the practical challenges of integrating AI into complex logistical operations. Khosrowshahi also notes the shift in AI companies towards enterprise solutions, detailing Uber's internal AI experiments and trade-offs between AI infrastructure spend and headcount.

"What started as let's try this for travel is now being used to hack reliability. So if you're in Westchester County in Armonk, and the liquidity for Uber is lower, you may not want to use on demand for your commute, but you can use reserve for your commute as well."

— Dara Khosrowshahi, CEO of Uber

AI Breakdown — "Claude Gets an Upgrade: Anthropic's 'Dreaming'"

Runtime: 16 min | Host: AI Breakdown | Guest: Host-led discussion

Who should listen: AI developers and enthusiasts tracking the latest advancements in large language models, especially those concerned with efficiency, memory, and computational costs.

This episode details Anthropic's new 'Dreaming' feature for Claude, enabling self-improvement through session review and memory consolidation to boost efficiency and reduce token usage. It also covers Anthropic's significant cloud computing partnerships and the shutdown of Google's Project Mariner due to high compute costs. A troubling report on AI app data leaks underscores the urgent need for secure AI development.

"I think there's a huge difference between AI building you a working app and AI building you a secure app that isn't going to leak your data. And so that's kind of the next big phase that a lot of these companies are having to work on."

— AI Breakdown

The AI Daily Brief: Artificial Intelligence News and Analysis — "Is AI Doom Going Out of Style?"

Runtime: 27 min | Host: Nathaniel Whittemore | Guest: Host-led discussion

Who should listen: Anyone tracking public sentiment and economic impacts of AI, particularly those interested in challenging the prevailing "job apocalypse" narrative.

Nathaniel Whittemore explores a potential "vibe shift" away from AI doomerism, with figures like Sam Altman emphasizing augmentation over replacement. He highlights evidence like Atlassian's AI-driven earnings and Anthropic's explosive revenue growth, suggesting AI is a job creator and firm builder. The episode touches on how the "job apocalypse" narrative might be structurally flawed and how new valuation models are emerging for agent intelligence.

"We want to build tools to augment and elevate people, not entities to replace them. I think a lot of people are going to be busier and hopefully more fulfilled than ever and jobs doomerism is likely long term wrong."

— Sam Altman, CEO of OpenAI

"The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis — "'Descript Isn't a Slop Machine': Laura Burkhauser on the AI Tools Creators Love and Hate"

Runtime: 83 min | Host: Erik Torenberg, Nathan Labenz | Guest: Laura Burkhauser (CEO, Descript)

Who should listen: Creative professionals, product managers, and developers of generative AI tools who are focused on practical applications and managing user expectations.

Descript CEO Laura Burkhauser delves into the integration of generative AI into creative workflows, addressing the concept of "slop" content and the polarizing nature of AI among creators. She explains Descript's strategy for model selection, balancing frontier models with in-house systems to enhance usability. The discussion also covers the role of human judgment in aesthetic evaluations for AI output and the challenges of translating hype into practical, high-quality creative tools.

"To me, I think about slop as being a form of content arbitrage. So there's like a it's when you can identify a temporary almost like inefficiency in the market or opportunity in the market to create content that is likely to give you a return on your investment."

— Laura Burkhauser, CEO of Descript

"The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis — "Milliseconds to Match: Criteo's AdTech AI & the Future of Commerce w/ Diarmuid Gill & Liva Ralaivola"

Runtime: 87 min | Host: Nathan Labenz, Alex Persky Stern | Guest: Diarmuid Gill (CTO, Criteo), Liva Ralaivola (VP of Research and Head of the AI Lab, Criteo)

Who should listen: AdTech professionals, AI researchers, and privacy advocates interested in how cutting-edge AI is balancing personalization with data privacy in real-time commerce.

Criteo's CTO Diarmuid Gill and VP of Research Liva Ralaivola discuss how they leverage AI for personalized advertising on the open internet, emphasizing transparency and the strict use of anonymous IDs. They detail the extreme technical challenges of real-time bidding, requiring millisecond-level predictions, and how Criteo is combining LLMs with dynamic commerce data. The episode also highlights Criteo's unique academic culture and the advantages of European AI talent.

"For the first time ever, we have the ability to provide the end users the experience. The same experience you get when you go into a store and you've got this really kick ass sales assistant, only cares about giving you a good experience."

— Diarmuid Gill, CTO of Criteo

AI Breakdown — "Anthropic Aiming for $1 Trillion Valuation"

Runtime: 15 min | Host: AI Breakdown | Guest: Host-led discussion

Who should listen: Investors and strategists tracking the financial movements and ambitious goals of leading AI companies, as well as the broader AI ecosystem's infrastructure plays.

This episode covers Anthropic's audacious plan to raise funds at a $1 trillion valuation, eyeing an IPO by late 2026, backed by sovereign wealth funds and tech giants. It also highlights SpaceX's massive investment in a Texas AI chip plant and Apple's strategy to integrate various AI models into camera-equipped AirPods. The segment controversially reveals that Microsoft's initial investment in OpenAI was driven more by fear of defection than pure visionary belief.

"Anthropic is planning to potentially raise money at a 1 trillion dollar valuation."

— AI Breakdown

Latent Space: The AI Engineer Podcast — "🔬Doing Vibe Physics — Alex Lupsasca, OpenAI"

Runtime: 92 min | Host: swyx + Alessio, RJ Honicke, Brandon | Guest: Alex Lupsasca (Fellow at OpenAI & Professor at Vanderbilt University)

Who should listen: Scientists, researchers, and technologists who want to understand the profound impact of advanced AI on complex problem-solving and the acceleration of scientific discovery.

Alex Lupsasca, a theoretical physicist at OpenAI, shares how GPT-3 and GPT-5 have fundamentally transformed his research process. He details instances where AI solved complex physics problems in minutes that took human experts years, generated 110 pages of novel physics research, and even discovered new proof techniques. This groundbreaking discussion highlights AI's role as a creative collaborator, pushing the boundaries of theoretical physics and challenging traditional scientific methodologies.

"When GPT-5 came out, it was able to reproduce one of my best papers (that took a very long time to come up with) in 30 minutes."

— Alex Lupsasca, Fellow at OpenAI & Professor at Vanderbilt University

The AI Daily Brief: Artificial Intelligence News and Analysis — "Who Cares About Consumer AI"

Runtime: 31 min | Host: Nathaniel Whittemore (NLW) | Guest: Host-led discussion

Who should listen: Product managers, investors, and entrepreneurs weighing the opportunities and challenges in the consumer AI market versus the enterprise space.

Nathaniel Whittemore (NLW) examines the puzzling shift away from consumer AI in the industry, despite its rapid user growth (ChatGPT now rivals TikTok). He discusses why major players like OpenAI prioritize enterprise and code generation, even shutting down consumer projects due to compute allocation choices. The episode explores the viability of consumer AI business models, such as advertising, and addresses skepticism from figures like Jamie Dimon regarding free AI offerings.

"It's not clear to me how consumer is going to play out. A lot of you probably use Gemini, you can use it for free and that may be completely sufficient for your requirements."

— Jamie Dimon, CEO of JPMorgan Chase

The AI Daily Brief: Artificial Intelligence News and Analysis — "Surprise Elon Anthropic Team Up Reshapes the AI Race"

Runtime: 31 min | Host: Nathaniel Whittemore | Guest: Host-led discussion

Who should listen: AI industry watchers, strategists, and investors keen on understanding the shifting alliances and infrastructure plays defining the future of the AI race.

This episode reveals a surprising partnership between Anthropic and SpaceX, where Anthropic gains critical access to SpaceX's Colossus 1 data center to alleviate its compute crunch. Nathaniel Whittemore details Elon Musk's strategic pivot from AI model challenger to infrastructure provider, influenced by Tesla's AV challenges and a long-standing feud with Sam Altman. The alliance signals a new phase where access to substantial compute becomes a primary differentiator, not just model superiority.

"Musk has compute capacity but a meh model. And Anthropic has a fantastic model with weak capacity. And thus a new alliance is born."

— Nathaniel Whittemore, Host of The AI Daily Brief