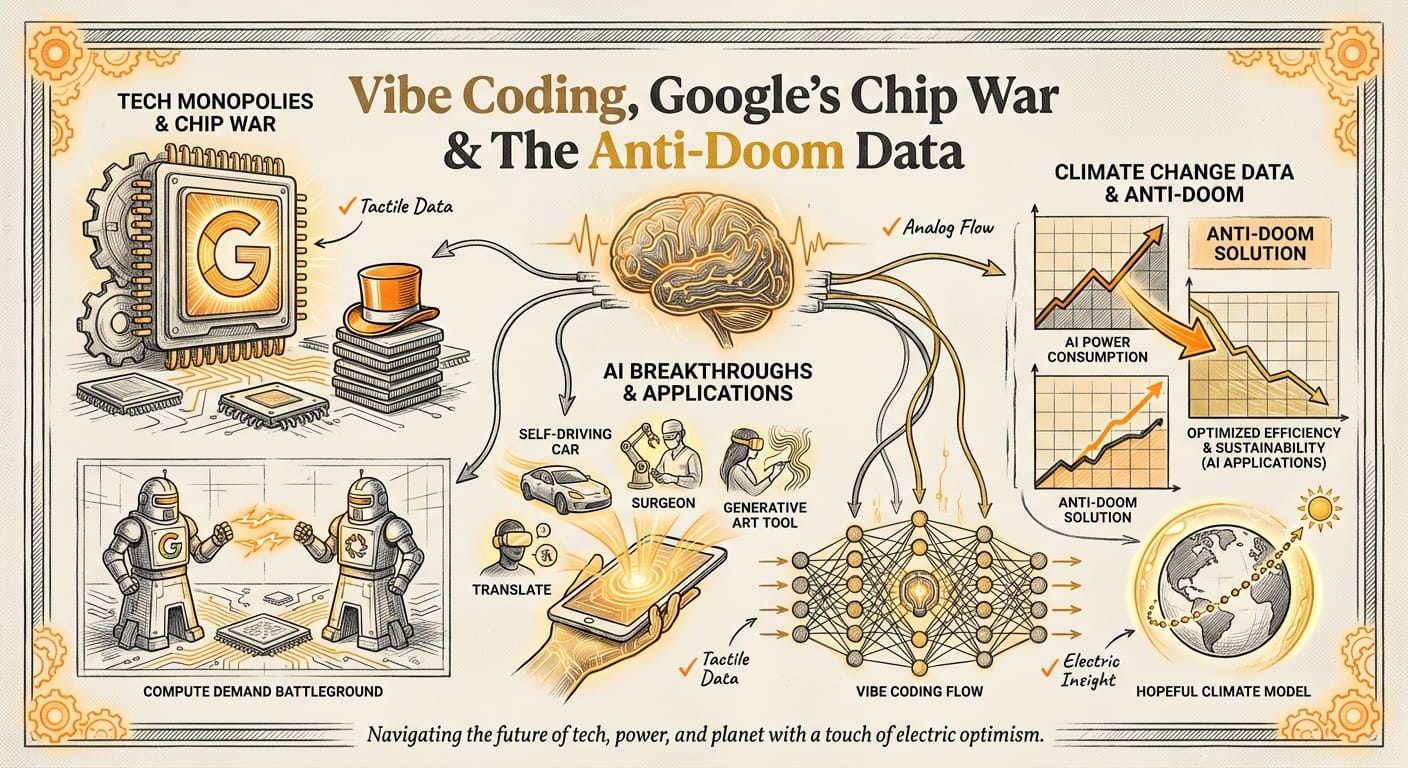

Vibe Coding, Google’s Chip War & The Anti-Doom Data

Good morning. While you were fighting over the last dinner roll at Thanksgiving, the AI industry decided to skip the holiday break entirely. From Google threatening Nvidia's dominance to Anthropic redefining how software is getting built, the "lull" was louder than the busy season. Let's get into it.

1. THE BIG STORY

The Rise of "Vibe Coding"

While the world was distracted by turkey, Anthropic dropped Claude Opus 4.5, and it didn’t just beat benchmarks—it triggered an existential crisis for software engineers.

The tech community has coined a new term for what this model enables: "Vibe Coding." This is no longer about autocompleting a for loop. It’s about describing an entire application (the "vibe" and functionality) and having the AI handle the implementation details end-to-end.

- The Breakthrough: Early users like Dan Shipper and the team at Every report being able to "vibe code" entire apps without touching the underlying code. Previous models would trip over their own feet after a few complex instructions; Opus 4.5 maintains the thread for 20-30 minutes of autonomous work.

- The Stats: On SWE-bench Verified (the gold standard for coding tests), Opus 4.5 hit a massive 80.9%, leaving GPT-4o and Gemini 3 in the dust. It also reportedly uses 76% fewer tokens to get the right answer, making it surprisingly cost-effective.

- Why it matters: We are rapidly moving from "Copilots" (AI helps you write code) to "Agents" (AI writes the code, you manage the product). As Nathan Labenz noted, coding is the first domain to be fully automated because the feedback loop is instant: it either runs or it doesn't.

The Tension: There is a growing split in how we view this future. On one side, independent creators are building software with zero technical debt. On the other, infrastructure experts like Tim Davis (The Neuron) warn that we barely understand how these models work—launching "vibe-based" code into critical infrastructure without knowing what's under the hood is a massive gamble.

The bottom line: Coding is changing from a writing task to an architectural task. If you aren't using AI to write your first draft, you’re already working too hard.

(Sources: AI Daily Brief, The Cognitive Revolution, The Neuron)

2. THE RUNDOWN (Speed Reads)

What else happened this week.

- 🔥 Google vs. Nvidia: The rumor mill is spinning that Meta might be buying TPUs (Tensor Processing Units) directly from Google to power its data centers. If true, this breaks Nvidia’s stranglehold on the chip market. Nvidia even issued a defensive tweet claiming they are "a generation ahead," which felt suspiciously nervous. The takeaway: The monopoly on AI hardware is finally cracking. (Source: AI Breakdown)

- 🤖 Superhuman Sales: Forget chatbots. Amanda Kahlow's new startup, 1Mind, is deploying "Superhumans": video avatars that handle the entire sales cycle. HubSpot reportedly saw a 25% revenue increase using them. The takeaway: The SDR role (qualifying leads) is dead; AI agents now have infinite memory and speak every language fluently. (Source: Agents of Scale / Cognitive Revolution)

- 💰 Infrastructure Fix: Modular raised $250M to become the "Android of AI." Right now, AI software is fragmented across different chips (Nvidia, AMD, Google). Modular is building a unified layer so developers can write code once and run it anywhere. The takeaway: The unsexy layer of "making things actually run" is where the next fortunes will be made. (Source: The Neuron)

- 🏫 School’s New Rule: In a keynote to educators, Nathan Labenz advised schools to stop banning AI and start "co-learning." He noted that while AIs are getting human-level scores on exams, they are decidedly not human-like in how they reason. The takeaway: We need to teach kids "AI Literacy" immediately—specifically, how to manage meaningful work when the AI does the grunt work. (Source: Cognitive Revolution)

3. DEEP DIVE: The Swarm (Not the Bee Kind)

Redefining Autonomous Robotics

While we obsess over LLMs writing poetry, a physical shift is happening in robotics. Chris Benson, a strategist at Lockheed Martin, broke down the concept of Swarming—and why most people are using the word wrong.

The Definition: A fleet of drones flying in a dragon shape at a light show is not a swarm. That is a fleet following a pre-programmed path. A true swarm features decentralized decision-making where individual agents (robots/drones) communicate to solve a problem without a central "boss" telling them what to do.

"If you've got a boss, you might not be a swarm." — Chris Benson, via Practical AI

The Use Case: Why do we care? Because "Smart Homes" are dumb. Currently, you tell Alexa to turn on a light. In a swarm future, your house is an ecosystem of tiny robots and sensors that autonomously manage energy, water, and maintenance.

- Example: A swarm of sensors detects a water leak, communicates with a valve to shut it off, and dispatches a small bot to dry the floor—all without you opening an app.
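To make the "no boss" idea concrete, here is a minimal Python sketch of the leak scenario. Everything in it is hypothetical and for illustration only: the class names, the `0.8` moisture threshold, and the event strings are our assumptions, not anything Chris Benson or Practical AI described. The point is structural: each device only talks to its peers and reacts to what it hears, and no controller object decides the sequence.

```python
from __future__ import annotations


class Agent:
    """A swarm member: knows its peers, keeps a local log, has no central boss."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.peers: list[Agent] = []
        self.log: list[str] = []

    def broadcast(self, event: str) -> None:
        # Peer-to-peer: every neighbor hears the event and decides for itself.
        for peer in self.peers:
            peer.receive(event)

    def receive(self, event: str) -> None:
        # Default behavior: ignore events this agent does not care about.
        pass


class LeakSensor(Agent):
    LEAK_THRESHOLD = 0.8  # hypothetical moisture reading, on a 0-to-1 scale

    def sense(self, moisture: float) -> None:
        if moisture > self.LEAK_THRESHOLD:
            self.log.append("leak detected")
            self.broadcast("leak")


class Valve(Agent):
    def __init__(self, name: str) -> None:
        super().__init__(name)
        self.is_open = True

    def receive(self, event: str) -> None:
        if event == "leak" and self.is_open:
            self.is_open = False
            self.log.append("valve closed")
            self.broadcast("water_off")


class DryingBot(Agent):
    def receive(self, event: str) -> None:
        # The bot waits until the water is actually off before it moves.
        if event == "water_off":
            self.log.append("drying floor")


def connect(*agents: Agent) -> None:
    """Wire every agent to every other agent (a fully connected mesh)."""
    for agent in agents:
        agent.peers = [other for other in agents if other is not agent]


sensor = LeakSensor("kitchen-sensor")
valve = Valve("main-valve")
bot = DryingBot("floor-bot")
connect(sensor, valve, bot)

sensor.sense(0.93)  # a wet reading kicks off the chain reaction
```

Notice there is no `HomeController` class anywhere. The valve closes because it heard "leak", and the bot starts drying because it heard "water_off". Remove the sensor's connection to any one device and the rest still work, which is the property that separates a swarm from a choreographed fleet.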

Our take: We are moving from "Human-in-the-loop" (you driving the robot) to "Human-on-the-loop" (you setting the goal, the swarm figuring out the 'how').

(Source: Practical AI)

4. CONTRARIAN CORNER

Where the herd is wrong.

The Consensus: We are doomed. Climate change is unsolvable, progress is killing the planet, and we need to de-grow the economy to survive.

The Reality: Hannah Ritchie, data scientist and author, argues the data proves otherwise. We are decarbonizing faster than people realize. Per capita emissions in rich countries are falling, renewables (solar/wind) have dropped in price by 90% in a decade, and nuclear power remains statistically safer than almost any other energy source despite public fear.

Why she might be right: We are prone to "doom scrolling" narratives, but technical innovation has decoupled economic growth from carbon emissions. We don't need to shrink our lives; we need to build better alternatives (like lab-grown meat and cheaper batteries) that people want to use.

(Source: Decoder)

5. CHART OF THE WEEK

The Scariest Curve in Tech

According to a leaked internal slide deck from Google, the demand for AI compute isn't just growing linearly; it's growing exponentially.

- The Stat: Google needs to double its AI compute capacity every 6 months.

- The Projection: They need to achieve a 1,000x increase in capacity over the next 4-5 years.

- Context: This explains why Google is scaling up TPUs and why Amazon is spending $50B on data centers. The "AI Bubble" conversation is useless because the big players are betting the entire GDP of small nations that scaling laws will hold.
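A quick sanity check on how the two numbers above fit together, as a Python sketch. The only input taken from the slide deck is the 6-month doubling period; the function names are ours, and the math assumes the doubling rate stays perfectly constant, which real capacity build-outs rarely do.

```python
import math

# From the stat above: capacity doubles every 6 months.
DOUBLING_PERIOD_YEARS = 0.5


def growth_factor(years: float) -> float:
    """Capacity multiple after `years` of steady doubling."""
    return 2 ** (years / DOUBLING_PERIOD_YEARS)


def years_to_reach(multiple: float) -> float:
    """Years of steady doubling needed to hit a given capacity multiple."""
    return math.log2(multiple) * DOUBLING_PERIOD_YEARS


four_year_growth = growth_factor(4)     # 8 doublings
five_year_growth = growth_factor(5)     # 10 doublings
years_for_1000x = years_to_reach(1000)  # just under 5 years

print(f"After 4 years: {four_year_growth:.0f}x")
print(f"After 5 years: {five_year_growth:.0f}x")
print(f"Years to reach 1,000x: {years_for_1000x:.2f}")
```

At a strict 6-month doubling, four years gets you 256x and five years gets you 1,024x, so the 1,000x projection lines up with the five-year end of the range.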

(Source: AI Breakdown)

6. THE CLOSE

One last thing: If you're looking for a wholesome use of AI this holiday season, try the "Underdog Ad Agency" idea. Have your kids find a long-term resident at a local animal shelter, use ChatGPT to rewrite the pet's bio as a superhero movie trailer, and use an image generator to make a movie poster for the dog (e.g., "The Silent Guardian"). It’s a way to teach kids prompt engineering and empathy at the same time.

Have a great week.

Monday Morning Briefing is powered by Velocity Road.