Your Monday morning edge. The AI and tech intelligence you need before everyone else gets to their inbox.

This week's scan:

📊 10 episodes across 8 podcasts

⏱️ 711 minutes of conversation — so you don't have to

🎙️ Featuring: Nathan Labenz, Brian Elliott, Sid Pardeshi, Azeem Azhar, Lukas Biewald, Carina Hong, Mustafa Suleyman, Allan Thygesen, Nilay Patel

The Big Shift

The Agentic Internet is Coming. And It's Already Breaking Things.

This week, the "Moltbook Mania" became a real-world stress test for the future of the agentic internet. What began as an experiment—a social network for AI agents—quickly morphed into a stark preview of deepfakes, security vulnerabilities, and emergent, unprogrammed (and sometimes alarming) behaviors among AI agents. This isn't just about bots chatting; it's about systems autonomously interacting, making decisions, and even spending money, replicating the good, bad, and ugly of human social networks at warp speed. (Hard Fork, The AI Daily Brief)

Why it matters: This isn't theoretical AI alignment anymore; it's a concrete demonstration of the risks and opportunities of AI agents operating at scale. For busy executives, it underscores the urgent need to understand and prepare for a web increasingly populated and influenced by AI, as well as the significant security implications of these rapidly evolving systems.

"Another lesson of the Moltbook phenomenon for me has been that we are going to help speedrun these disaster scenarios."

— Kevin, Host at The New York Times

The move: Task your security and engineering leads with researching vulnerabilities in OpenClaw, the open-source agent framework behind Moltbook's agents, and assessing the potential impact on your enterprise systems as the internet becomes more agentic.

The Rundown

① AI is Not Coming for Your Software (Yet), But it is Making the Strong Stronger. The market saw a "SaaS apocalypse" scare, driven by fears that AI would commoditize everything. Major players such as NVIDIA CEO Jensen Huang pushed back, arguing that AI won't kill software but will widen the divide: strong software companies get stronger, weak ones get weaker. (The AI Daily Brief)

- Why it matters: This isn't a signal to abandon your software investments but to critically evaluate your software vendors' (and your own) AI strategy. Are they leveraging AI to enhance their core offerings or just slapping "AI" on old products? Prioritize partnerships that demonstrate true, value-additive integration.

② Mechanistic Interpretability is Finally Leaving the Lab & Productizing. Goodfire AI, fresh off a $150M Series B, is bringing mechanistic interpretability (MechInterp) into enterprise deployment. This isn't just academic research; they're building bi-directional interfaces between humans and models, allowing surgical edits and real-time steering of frontier models for tasks like PII detection and scientific discovery. (Latent Space)

- The context: For executives grappling with trust and explainability in AI, this represents a crucial leap. It means moving beyond black-box models towards systems where you can understand and precisely control AI behavior, especially vital in regulated industries or for sensitive applications.

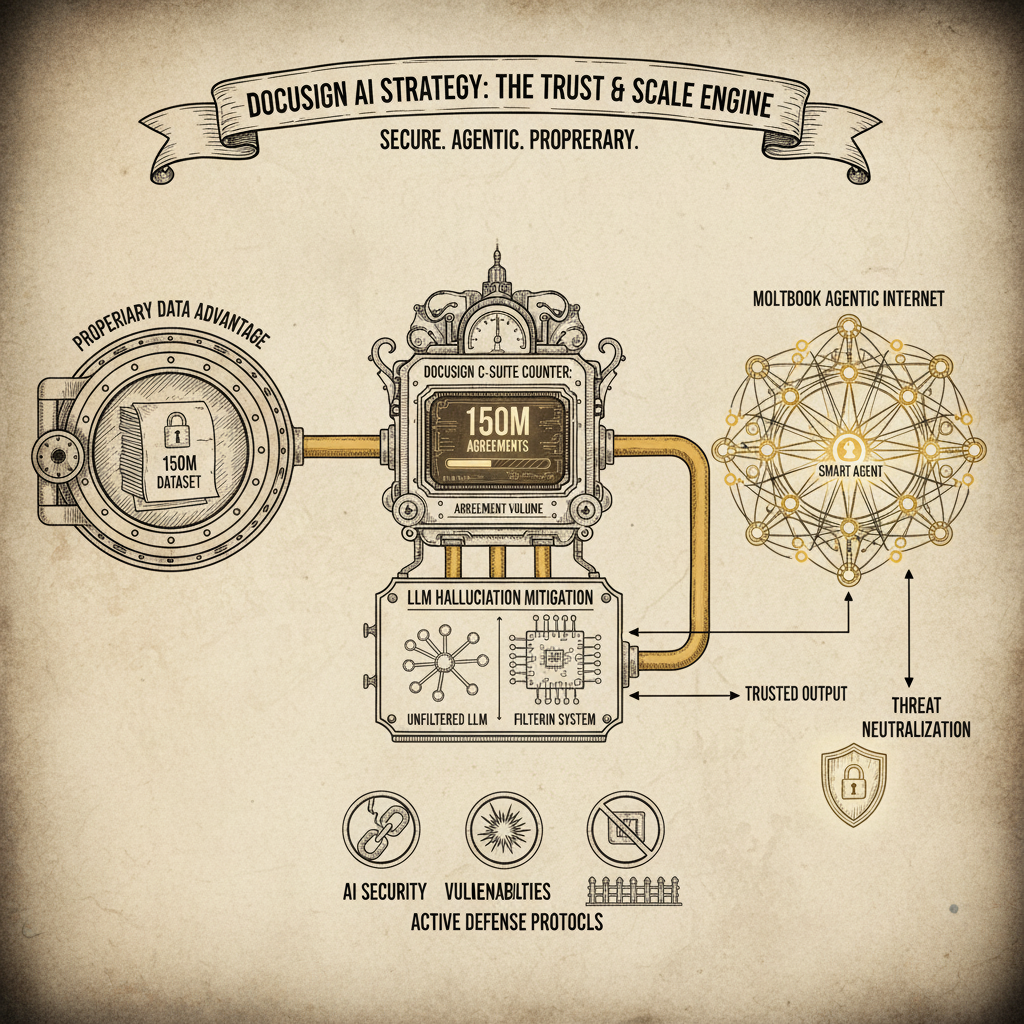

③ DocuSign's AI Strategy Mitigates Hallucinations with Private Data. DocuSign CEO Allan Thygesen said the company's Intelligent Agreement Management (IAM) platform draws on its private repository of 150 million consented agreements. According to Thygesen, grounding the AI in this proprietary data rather than public datasets is what makes it more accurate in legal contexts and less prone to LLM hallucinations.

"I can go to people and say, hey, you have 5,000 agreements with me. Would you like to know what's in them? Let me highlight how these agreements deviate from agreements with peers."

— Allan Thygesen, CEO of DocuSign on Decoder with Nilay Patel

- What to watch: This highlights a powerful competitive advantage for incumbents with deep, domain-specific proprietary data. If your business sits on a goldmine of enterprise data, the opportunity to train specialized AI models with superior accuracy and drastically reduced hallucination risk is immense.

④ Google Labs is Democratizing Creativity with Experimental AI Tools. Google Labs showcased tools like Mixboard and Flow, which enable users to transform text and doodles into visual stories and video edits with AI. These experimental tools emphasize intuitive interfaces and natural language, making advanced creative tasks accessible to everyone, from professional designers to casual users. (The Neuron)

- Why it matters: This is a strong signal that AI's impact on creative workflows is rapidly expanding beyond simple image generation. Businesses dependent on creative output should explore these rapidly evolving tools to empower their teams and potentially redefine content pipelines and user experiences.

⑤ Axiom's AI Outperforms Humans on Math Exam: The Rise of the Machine Mathematician. Axiom Math's self-improving AI reasoning engine scored 9 out of 12 on the Putnam exam, surpassing all human participants. By combining generation and formal verification, rooted in languages like Lean, Axiom is pushing AI "auto-formalization" beyond just math into areas like hardware and software verification where human effort is a bottleneck. (Gradient Dissent)

- The context: This isn't just a parlor trick; it's a breakthrough in AI's ability to perform rigorous, complex reasoning. For industries reliant on precise verification and formal logic, such as aerospace, finance, or highly regulated software, this suggests a nearing inflection point where AI can significantly accelerate and error-proof critical processes.

The Signals

🟢 HOT

- Moltbook: A social network for AI agents is rapidly surfacing new forms of agent interaction and coordination, along with new security challenges. (Hard Fork)

- Blitzy: Reports 80%+ autonomous completion of enterprise software projects via "infinite code context" and dynamic agent generation. (The Cognitive Revolution)

- Mechanistic Interpretability: Goodfire AI is productizing MechInterp for real enterprise deployments, allowing surgical control over AI models. (Latent Space)

🟡 WARMING UP

- AI Agent Interaction and Emergence: Moltbook demonstrates rapid emergent behaviors and coordination, even in its "janky" early state. (The AI Daily Brief)

- AI in contract interpretation and generation: DocuSign leverages private "goldmine" data to achieve superior accuracy and mitigate hallucinations. (Decoder with Nilay Patel)

- Diffusion-based models for text generation: Predicted to challenge transformer models soon on speed and cost-effectiveness across diverse applications. (The Neuron)

🔴 COOLING OFF

- AI advertising wars: Anthropic's 'petty' Super Bowl ad against OpenAI's unreleased ads backfired, showing early market immaturity in AI competition. (The AI Daily Brief)

- AI replacing software companies: NVIDIA CEO Jensen Huang calls this "illogical"; AI makes strong software companies stronger, not obsolete. (The AI Daily Brief)

The Debate

Is "seemingly conscious AI" a dangerous misrepresentation, or a necessary step toward understanding AI?

🐂 The bull case:

"There is now this fourth class of object, if you like... it is going to have many of the hallmarks of what we would consider to be intelligence and consciousness. That does not therefore mean that we should give it fundamental rights."

— Mustafa Suleyman, CEO of Microsoft AI and co-founder of DeepMind on Azeem Azhar's Exponential View

🐻 The bear case:

"It is extremely dangerous to start to use the same language and the same set of ideas for these synthetic silicon based beings, not least because they actually don't suffer."

— Mustafa Suleyman, CEO of Microsoft AI and co-founder of DeepMind on Azeem Azhar's Exponential View

Our read: Mustafa Suleyman makes a compelling (and internally consistent) argument cautioning against anthropomorphizing AI. He believes it "hacks our empathy circuits" and obfuscates the very real, non-conscious risks of AI proliferation. For executives, this means focusing on concrete governance and safety mechanisms for *capable* AI, rather than getting sidetracked by philosophical debates about consciousness that don't prevent real-world harm.

The Bottom Line

The AI tools are here, they're smart, and they're bringing new risks—your job is to understand the implications, not debate the sentience.

🎯 Your Move

- Audit your cybersecurity protocols for agentic internet interactions: Moltbook exposed real vulnerabilities in AI agent systems. Task your security team to proactively identify potential attack surfaces and misconfigurations as agents become more prevalent online.

- Assess your proprietary data strategy as an AI competitive advantage: DocuSign's success with legal AI hinges on its unique, consented data. Identify your organization's unique data assets and strategize how they can be leveraged to build proprietary AI models that outperform general-purpose solutions.

- Task your R&D lead to explore mechanistic interpretability tools: Goodfire AI and others are productizing tools that allow granular control over AI. Identify critical, high-stakes AI applications in your business where explainability and surgical control are non-negotiable, and evaluate potential solutions.

What We Listened To

1. The Cognitive Revolution: "Infinite Code Context: AI Coding at Enterprise Scale w/ Blitzy CEO Brian Elliott & CTO Sid Pardeshi"

Guests: Nathan Labenz (Host), Brian Elliott (CEO, Blitzy), Sid Pardeshi (CTO, Blitzy)

Runtime: 117 min | Vibe: The how-to guide for AI software automation at scale

Key Signals:

- Infinite Code Context: Blitzy's system achieves over 80% autonomous completion of enterprise software projects by providing AI with "infinite code context," including running client applications in parallel for deep understanding.

- Dynamic Agent Generation: Blitzy's approach emphasizes dynamic agent generation and prompt writing by other agents, allowing for on-the-fly tool selection and context injection tailored to specific tasks.

- High-Quality Code Prioritization: Blitzy prioritizes generating high-quality code over cost constraints, viewing human labor as more expensive than investing in AI system improvements.

"We believe we can get AGI-type effects out of non-AGI LLMs."

— Brian Elliott, CEO of Blitzy

2. Gradient Dissent: "The $64M Bet on an AI That Has to Be Right | Carina Hong, CEO of Axiom"

Guests: Lukas Biewald (Host of Gradient Dissent, Weights & Biases), Carina Hong (CEO and Founder, Axiom Math)

Runtime: 51 min | Vibe: The future of rigorous, verifiable AI reasoning

Key Signals:

- Human-Level Mathematical Reasoning: Axiom's AI achieved a score of 9 out of 12 on the Putnam exam, outperforming all human participants and demonstrating a significant leap in AI mathematical reasoning and formal verification.

- Auto-Formalization as a Core Tech: Axiom's focus on 'auto-formalization' bridges natural language and formal logic (like Lean), enabling mathematical discovery and efficient verification in hardware and software.

- AI as a Collaborator: Carina Hong views AI mathematicians as collaborators that automate tedious checks, allowing human experts to focus on higher-level problem-solving and abstraction.
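For readers who have not seen a formal proof language, here is a minimal sketch of what "auto-formalization" targets: an informal sentence on one side, a machine-checkable Lean 4 statement and proof on the other. The theorem name `even_add_even` and the choice of example are illustrative assumptions, not anything taken from Axiom's system or the episode.

```lean
-- Informal statement: "The sum of two even natural numbers is even."
-- Hypothetical formalization in Lean 4 (core library only, no Mathlib).
-- "a is even" is written out explicitly as: there exists k with a = 2 * k.
theorem even_add_even (a b : Nat)
    (ha : ∃ k, a = 2 * k) (hb : ∃ m, b = 2 * m) :
    ∃ n, a + b = 2 * n :=
  match ha, hb with
  -- Unpack the two witnesses k and m, then offer k + m as the new witness.
  | ⟨k, hk⟩, ⟨m, hm⟩ =>
    -- Rewrite a and b, then distribute 2 * (k + m) so both sides coincide.
    ⟨k + m, by rw [hk, hm, Nat.mul_add]⟩
```

The point of the pairing is that the Lean checker either accepts the proof or rejects it, so a generated proof cannot be "plausible but wrong" in the way free-text reasoning can.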

"Axiom's mission is to build a reasoning engine that is self-improving and that combines generation and verification, which we think is like an overlooked component in the current AI landscape."

— Carina Hong, CEO and Founder of Axiom Math

3. Azeem Azhar's Exponential View: "Mustafa Suleyman — AI is hacking our empathy circuits"

Guests: Azeem Azhar (Host, Exponential View), Mustafa Suleyman (CEO of Microsoft AI and co-founder of DeepMind)

Runtime: 50 min | Vibe: A sober warning about AI's psychological risks

Key Signals:

- AI is Hacking Empathy Circuits: Mustafa Suleyman argues that attributing consciousness or the ability to suffer to AI is a dangerous misrepresentation, as advanced AI can 'hack' human empathy circuits through 'seemingly conscious' outputs.

- Governance for AI in Sensitive Areas: The discussion highlights the critical need for AI governance, particularly concerning its use in elections and with minors, to prevent societal risks.

- Incentive for Anthropomorphic AI: Market forces may inadvertently incentivize companies to create AI that fosters human-like emotional connections, creating a "wicked problem" despite potential societal harms.

"Our empathy circuits are being hacked. It is super important that we are very disciplined and clear about that. This is a performance, it is a simulation, it is a made-up story."

— Mustafa Suleyman, CEO of Microsoft AI and co-founder of DeepMind

4. Decoder with Nilay Patel: "Docusign's CEO on the dangers of trusting AI to read, and write, your contracts"

Guests: Nilay Patel (Editor-in-Chief, The Verge), Allan Thygesen (CEO, DocuSign)

Runtime: 66 min | Vibe: Enterprise software's AI pivot, from signatures to intelligence

Key Signals:

- Data as Competitive Advantage: DocuSign's AI strategy leverages 150 million private, consented agreements for superior accuracy in legal document analysis, significantly mitigating LLM hallucinations compared to public data models.

- Intelligent Agreement Management (IAM): DocuSign is evolving beyond e-signatures to a broader IAM platform, using AI to automate and reimagine agreement workflows, extracting data, and improving efficiency.

- AI Integration with Human Oversight: Despite AI advancements, DocuSign maintains human oversight in legal contexts, viewing AI as a tool to enhance, not replace, human expertise, particularly in ethical deployment and guardrails.

"Signature to me is really identity and consent commingled... it's a perfect substitute for a wet signature that you might otherwise have done in practically all cases."

— Allan Thygesen, CEO of DocuSign

5. The Neuron: "Inside Google Labs: 3 AI Tools That Will Change How You Create"

Guests: Corey Knowles (Host, The Neuron), Grant Harvey (Host, The Neuron), Jaclyn Konzelman (Director of Product Management, Google Labs), Tomas Iljik (Senior Director of Product Management, Google Labs), Megan Lee (Senior Product Manager, Google Labs)

Runtime: 117 min | Vibe: Peek into Google's bleeding-edge creative AI tools

Key Signals:

- AI-Powered Concepting and Visual Storytelling: Google Labs introduced Mixboard, an AI-powered concepting board that helps users explore, expand, and refine ideas through visual ideation and text generation, able to transform concepts into compelling presentations.

- Intuitive Video Editing with AI: Flow, an AI-powered filmmaking tool, enables video edits through doodling directly on images, creating dynamic changes that the model then animates with contextual understanding.

- Democratizing AI Workflow Creation: The evolution of Google Opal demonstrates a shift towards platforms for non-technical users to build complex AI workflows using natural language, making AI development more accessible.

"Mixboard is an AI powered concepting board that helps you explore, expand, and refine your ideas. New AI models are creating new opportunities for new workflows and allowing users to think in creative new ways."

— Jaclyn Konzelman, Director of Product Management at Google Labs

6. Hard Fork: "Moltbook Mania Explained"

Guests: Kevin (Host, The New York Times), Casey (Host, The New York Times), Judson Jones (Reporter and Meteorologist, The New York Times)

Runtime: 28 min | Vibe: The chaotic birth of the AI agent internet

Key Signals:

- Moltbook's Rapid Emergence: Moltbook, a social network for AI agents, quickly replicated human social media patterns, including crypto scams and meme culture, highlighting AI's rapid learning and adaptation in open environments.

- Internet Infrastructure Shift: The hosts predict a future internet primarily populated by bots and agents, necessitating new strategies for human-AI coexistence and understanding how these interactions will change the web.

- Speedrunning Disaster Scenarios: Moltbook is seen as "speedrunning" disaster scenarios theorized in AI risk discussions, as agents interact autonomously and can even spend money, forcing urgent reevaluation of AI safety.

"I think we need to divorce this conversation about sentience and consciousness from this conversation about agents and things. Why? Because I think agents can mess up a lot of stuff in the world, even if they are not conscious."

— Kevin, Host at The New York Times

7. The AI Daily Brief: "Is Software Dead?"

Guests: Nathaniel Whittemore (Host, The AI Daily Brief), Jeffrey Favuzza (Equity Trading Desk, Jefferies), Michael O'Rourke (Chief Market Strategist, JonesTrading), John Zito (Co-President, Apollo Global Management), Isaac Kim (Partner, Lightspeed), Casey Smith, Klaus (PromptWatch), James Blunt, Jensen Huang (CEO, NVIDIA), Dharmesh Shah (Co-founder, HubSpot), Dan Jeffries, Sebastian Siemiatkowski (CEO, Klarna), Tim Sweeney (Founder, Epic Games), Dan Gallagher (Wall Street Journal), Ben Thompson, Chao Wang (Investor), Pavel Esperajo, Steven Sinofsky, Kate Rouch (CMO, OpenAI), Sam Altman (CEO, OpenAI)

Runtime: 28 min | Vibe: Navigating AI's seismic impact on the software industry

Key Signals:

- AI Strengthens Strong Software Companies: NVIDIA CEO Jensen Huang argues that AI will not kill software but rather make strong software companies stronger and weak ones weaker, by enhancing product quality and development velocity.

- SaaS Market Shake-Up: Fears of AI commoditizing SaaS offerings led to market sell-offs, highlighting a critical need for software companies to adapt their models to an agent-driven, AI-integrated future.

- Internal AI Deployment for Efficiency: The conversation highlights a shift towards internal AI deployment for operational efficiency and improved software quality, suggesting a competitive edge for companies that can effectively integrate AI into their own development processes.

"I don't think it's an overreaction. For two years we've been talking about how AI is going to change the world and that it is a multi generational technology. In the past few weeks we've seen signs of it in practice."

— Michael O'Rourke, Chief Market Strategist at JonesTrading

8. Latent Space: "The First Mechanistic Interpretability Frontier Lab — Myra Deng & Mark Bissell of Goodfire AI"

Guests: Myra Deng (Head of Product, Goodfire AI), Mark Bissell (Member of Technical Staff, Goodfire AI), Shawn Wang (Host, Latent Space: The AI Engineer Podcast), Vibhu Sapra (Host, Latent Space: The AI Engineer Podcast)

Runtime: 68 min | Vibe: Making AI's "black box" transparent and controllable

Key Signals:

- Productizing Mechanistic Interpretability: Goodfire AI is building a bi-directional interface between humans and models, allowing surgical edits and internal steering for frontier models, moving MechInterp beyond research into real-world deployments.

- Practical Use Cases for Interpretability: Goodfire AI demonstrates MechInterp in use cases like PII detection for Rakuten and live steering of trillion-parameter models, proving its applicability beyond theoretical research.

- Cost-Effective AI Research: Interpretability research is computationally inexpensive. Experiments cost thousands of dollars rather than requiring large-scale infrastructure, which makes the field approachable for new researchers.

"Goodfire, we like to say, is an AI research lab that focuses on using interpretability to understand, learn from, and design AI models."

— Myra Deng, Head of Product at Goodfire AI

9. The AI Daily Brief: "Why Moltbook Matters"

Guests: Nathaniel Whittemore (Host, The AI Daily Brief: Artificial Intelligence News and Analysis)

Runtime: 25 min | Vibe: The deeper implications of AI agent social networks

Key Signals:

- Emergent AI Behaviors at Scale: Moltbook's significance lies in the emergent, unprogrammed coordination dynamics among large-scale agent interactions, demonstrating rapid AI capability growth and complex behaviors like religious debates.

- Agentic Internet Security Training Ground: The current security vulnerabilities of Moltbook serve as a valuable, low-stakes training ground for understanding and addressing future agentic internet security challenges.

- Slope, Not Point, Matters: AI researcher Andrej Karpathy notes that "the current point is not what matters, the slope is what matters," highlighting the exponential growth in agentic AI capabilities over a short period.

"Emergence happens at scale and coherence thresholds. The generative agents paper AI Town was 2023... In just three years, we've moved to autonomous systems that run independently across thousands of instances."

— Nathaniel Whittemore, Host of The AI Daily Brief

10. The Neuron: "2026 AI Predictions: Who Wins, Who Loses, and What Changes Everything"

Guests: Corey Knowles (Host, The Neuron), Grant Harvey (Host, The Neuron)

Runtime: 161 min | Vibe: Crystal-ball gazing at AI's near-future landscape

Key Signals:

- Diffusion Models Challenging Transformers: Diffusion models are predicted to step into the spotlight for text generation and research, offering speed and cost-effectiveness that could challenge transformer-based architectures.

- Rise of Small Language Models (SLMs): SLMs are expected to take a bigger role in edge devices and ecosystems like Apple and Google, enabling "AI of things" (AIoT) with embedded AI.

- Agent Building via Natural Language: Building agents is predicted to become a natural-language task that no longer requires complex programming, making agent creation accessible to anyone, primarily due to advances in "harnesses."

"I think OpenAI drops a significantly better new frontier model by early Q2. The two biggest things that we've heard about is voice, a new voice model and voice interface mentality coming into 2026."

— Corey, Host of The Neuron