The AI agent market is hitting its inflection point—but the real battle is in distributed cognition, not just massive models.

The Intake

📊 12 episodes across 7 podcasts

⏱ 747 minutes of intelligence analyzed

🎙 Featuring: Corey Noles (The Neuron: AI Explained), Grant Harvey (The Neuron: AI Explained), Nick Heiner (Surge AI)

The Big Shift

The Internet of Cognition is Coming: AI's Future is Distributed, Not Just Scaled

The dominant narrative in AI has long been about ever-larger models and centralized intelligence. However, a significant pivot is underway, shifting focus from vertical scaling to horizontal, distributed AI systems. This new paradigm, dubbed the Internet of Cognition 🆕 by Vijoy Pandey 🆕 (SVP and GM, Outshift by Cisco), envisions AI agents collaborating and sharing context across a decentralized network, much like human societies evolved with language. This re-framing challenges the idea that a few colossal models will solve everything and instead points to an ecosystem of specialized agents working together.

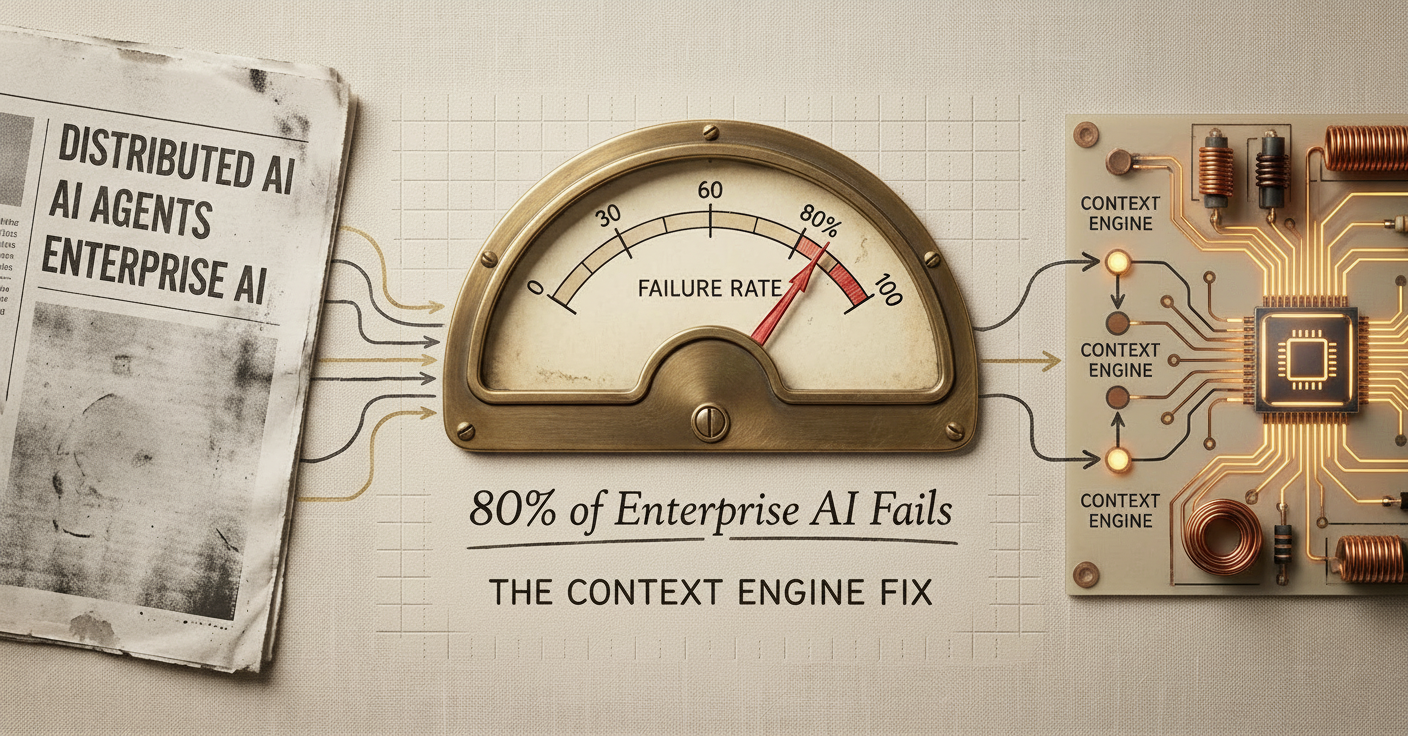

Why it's happening: The limits of AI models operating in isolation are becoming clear. Even the best frontier models fail on workplace tasks about 40% of the time, particularly tasks that require planning and adaptability beyond academic benchmarks (Grant Harvey on The Neuron: AI Explained). This points to a fundamental gap: AI agents often lack contextual understanding of an organization's legacy systems and internal processes, which contributes to an estimated 80% failure rate on complex enterprise tasks (Eran Yahav, CTO of Tabnine, on The AI in Business Podcast). The move to context-aware, collaborative agents is a direct response to these limitations.

The new architecture: Instead of monolithic AI, the future involves what Cisco 🆕 calls "Tool, Task, and Transaction-Based Access Control" (TTTBAC 🆕), in which agent privileges are ephemeral and granted for specific actions (Vijoy Pandey on "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis). This contrasts with the current "internet of information" and moves towards an "internet of intent," where agents discern and act on each other's purposes (Nathan Labenz on "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis). The shift is not just technical: it has profound implications for how businesses structure their AI strategies, pushing them towards "agent-native" architectures that enable seamless AI interaction (Dan Shipper, CEO of Every, on The Neuron: AI Explained).
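
To make the idea of ephemeral, action-scoped privileges concrete, here is a minimal sketch in Python. It is an illustration only, not Cisco's design: the `Grant` and `GrantBroker` names, the in-memory store, the single-use rule, and the 30-second default lifetime are all assumptions made for the example.

```python
import time
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """A short-lived privilege scoped to one agent, one tool, and one task."""
    agent_id: str
    tool: str
    task: str
    transaction_id: str
    expires_at: float  # clock reading after which the grant is invalid


class GrantBroker:
    """Hypothetical in-memory broker; a real system would sit behind a policy engine."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._grants = {}

    def issue(self, agent_id, tool, task, ttl_seconds=30.0):
        # Each grant gets a fresh transaction id, so it cannot be replayed elsewhere.
        grant = Grant(agent_id, tool, task, uuid.uuid4().hex,
                      self._clock() + ttl_seconds)
        self._grants[grant.transaction_id] = grant
        return grant

    def authorize(self, grant, agent_id, tool, task):
        # Single use: the grant is consumed whether or not the check passes.
        stored = self._grants.pop(grant.transaction_id, None)
        if stored is None or stored != grant:
            return False
        if self._clock() >= stored.expires_at:
            return False
        return (stored.agent_id, stored.tool, stored.task) == (agent_id, tool, task)


broker = GrantBroker()
grant = broker.issue("billing-agent", tool="crm.read", task="refund-1042")
print(broker.authorize(grant, "billing-agent", "crm.read", "refund-1042"))  # True
print(broker.authorize(grant, "billing-agent", "crm.read", "refund-1042"))  # False: already consumed
```

The point of the sketch is the shape of the check: access is tied to a specific tool, task, and transaction, and it disappears once used or expired, rather than living on as a standing role.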

"What's missing, Vijoy says, is the Internet of Cognition: higher-order protocols that AI agents need to share context, understand one another's intent, build reputation and establish trust, and ultimately solve problems in shared spaces."

— Nathan Labenz, Host on The Cognitive Revolution

The move: Start thinking about how your organization can transition from siloed AI applications to a more interconnected, collaborative agent ecosystem. This means not just adopting AI, but designing for agent-to-agent communication and trust protocols from the ground up.

The Rundown

① Specialized AI Models Are Disrupting General Purpose AI. Vertical AI models, post-trained with real-world interaction data, now outperform leading frontier models like GPT-5.4 and Opus 4.6, and cost less to run. (Nathaniel Whittemore on The AI Daily Brief: Artificial Intelligence News and Analysis)

→ Why it matters: This challenges the "Bitter Lesson" of AI and means many successful companies will need to be full-stack, owning the app, AI, and model layers for true differentiation. If vertical SaaS is your game, you're sitting on untapped fine-tuning assets.

② Agent-Native Architecture is the New North Star for Software Development. Designing applications for seamless interaction with AI agents, rather than human users, allows for flexible and unforeseen use cases, moving beyond predefined programmatic recipes. (Dan Shipper, CEO of Every, on The Neuron: AI Explained)

→ What to watch: This means reimagining software where AI is the primary author and user, making it critical for companies to become "agent-native" to fully leverage AI advancements. Dan Shipper noted that "The bug reports you get from agents are way better than the bug reports you get from humans" during their discussion on The Neuron: AI Explained.

③ AI Inference Costs Are Skyrocketing, Demanding New Budgeting Strategies. NVIDIA's Jensen Huang believes the "inference inflection has arrived," with token consumption increasing by orders of magnitude as agentic AI systems become more prevalent. (Azeem Azhar on Azeem Azhar's Exponential View)

→ The context: Companies need to treat tokens as a significant, centrally managed cost center, potentially budgeting substantial token allowances alongside engineer salaries so that teams can get the most out of agentic tools. Azeem Azhar highlighted that "The atomic unit of manufactured intelligence of AI is the token" on Azeem Azhar's Exponential View.

④ The Rise of the Context Engine for Enterprise AI Success. AI agents fail in an estimated 80% of complex enterprise tasks due to a lack of organizational understanding, highlighting the critical need for a "context engine" to provide internal knowledge and legacy system integration. (Eran Yahav, CTO of Tabnine, on The AI in Business Podcast)

→ Why it matters: Without this "brain" for AI, success rates on complex tasks stay low, token spend is wasted, and agents cannot operate reliably inside complex software architectures. Eran Yahav stated, "If you give agents that level of contextual information, you will see success rate going significantly higher" during the discussion on The AI in Business Podcast.

The Signals

🔥 Heating Up

• AI inference: Demand for AI compute appears effectively unbounded and is shifting from training to inference, driving NVIDIA's trillion-dollar order book. (Azeem Azhar on Azeem Azhar's Exponential View)

• Agent-to-agent communication: Essential for distributed AI systems to collaborate, requiring higher-order protocols akin to human language. (Vijoy Pandey on "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis)

• Enterprise Context Engine: Addresses the 80% failure rate of AI agents in complex tasks by providing organizational knowledge. (Eran Yahav on The AI in Business Podcast)

👀 On Watch

• Cisco 🆕: Driving the vision for an "Internet of Cognition," focusing on horizontal scaling and distributed AI systems. (Vijoy Pandey on "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis)

• Vijoy Pandey 🆕: Cisco SVP and GM, championing the "Internet of Cognition" framework for scaling AI. (Vijoy Pandey on "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis)

• Predictive Procurement 🆕: AI-driven models proactively making offers to suppliers, rather than reactive quoting, to address geopolitical volatility. (Edmund Zagorin on The AI in Business Podcast)

• Every 🆕: Building agent-native software like co-creation document apps, highlighting the shift in software architecture. (Dan Shipper on The Neuron: AI Explained)

🧊 Cooling Off

• General LLMs in specialized tasks: Vertical AI models outperform general models in specific domains, making reliance on broad LLMs for niche tasks suboptimal. (Nathaniel Whittemore on The AI Daily Brief: Artificial Intelligence News and Analysis)

• Monolithic AGI efforts: OpenAI's pivot away from Sora due to compute constraints signals that a singular, grand AGI might be less feasible than distributed, specialized intelligence. (Nathaniel Whittemore on The AI Daily Brief: Artificial Intelligence News and Analysis)

The Bottom Line

The future of AI is less about building one all-knowing brain and more about engineering an ecosystem where specialized, context-aware agents can seamlessly collaborate and act.

Episode Guide (Web Version)

1. The Neuron: AI Explained — "Inside the Secret Labs Where AI Learns to Work"

Runtime: 63 min | Guests: Corey Noles (Host, The Neuron), Grant Harvey (Host, The Neuron), Nick Heiner (Head of RL Environments, Surge AI)

Listen if: You're grappling with real-world AI deployment and the gap between academic benchmarks and enterprise task failure rates.

Nick Heiner from Surge AI breaks down why frontier models still fail 40% of the time on workplace tasks, highlighting the critical role of reinforcement learning environments in training AI agents. The discussion underscores the need for realistic training data and why robust evaluation sets are make-or-break for AI effectiveness.

"Even the best Frontier models fail about 40% of the time on workplace tasks, with failures clustering around planning, adaptability, groundedness and common sense."

— Grant Harvey, Host of The Neuron

Connects to: The Big Shift (real-world AI failure rates), The Rundown (context engine for enterprise AI success)

2. The AI Daily Brief: Artificial Intelligence News and Analysis — "How to Use Claude's Massive New Upgrades"

Runtime: 26 min | Guests: Nathaniel Whittemore (Host, The AI Daily Brief: Artificial Intelligence News and Analysis), Ethan Mollick (AI Researcher and Author)

Listen if: You're curious about how advanced AI agents are transforming daily workflows and team productivity.

Nathaniel Whittemore explores Claude's significant upgrades, including advanced file management, integrated Excel/PowerPoint add-ins, and full computer control capabilities. These features mark a shift from AI as a tool to an execution layer, enabling small teams to achieve unprecedented productivity.

"Claude remote control is extremely nice, can edit on macOS or iOS and Claude app on my production server from anywhere. He basically compared it favorably to an SSH session, which would be another, more technically complex way to log into your local device to control it while on the go."

— Pieter Levels, quoted by Nathaniel Whittemore

Connects to: The Rundown (agent-native architecture), The Signals (Claude, OpenClaw)

3. The AI in Business Podcast — "Why Enterprise AI Fails Without a Context Engine - with Eran Yahav of Tabnine"

Runtime: 31 min | Guests: Daniel Faggella (CEO and Head of Research, Emerj Artificial Intelligence Research), Eran Yahav (CTO and Co-founder, Tabnine)

Listen if: You're wondering why your enterprise AI pilots aren't delivering, especially in complex environments.

Eran Yahav explains the 80% failure rate of AI agents in complex enterprise tasks, attributing it to a lack of organizational understanding. He introduces the "context engine" as a vital solution, acting as a brain to provide agents with necessary knowledge, reducing token costs, and boosting success rates.

"AI agents are failing in around 80% of those cases (complex tasks). The reason, the underlying reason or the main reason is that they just do not understand the organization well enough."

— Eran Yahav, CTO and Co-founder at Tabnine

Connects to: The Big Shift (context engine), The Rundown (enterprise AI failure rates)

4. Azeem Azhar's Exponential View — "What NVIDIA’s bet on OpenClaw means for the future of AI and your token budget"

Runtime: 37 min | Host: Azeem Azhar (Host, Exponential View)

Listen if: You need to understand the financial implications of AI inference and token consumption for your budget.

Azeem Azhar details the shift from AI training to inference, where the token is the atomic unit of manufactured intelligence. He discusses NVIDIA's massive order book, the transformative potential of OpenClaw, and the dramatic increase in personal token consumption, urging companies to develop a clear "OpenClaw strategy" and manage exploding token budgets.

"Jensen revealed something far more important in his keynote: 'the inference inflection has arrived,' and this is about to transform how all companies should manage their budgets."

— Azeem Azhar, Host of Exponential View

Connects to: The Rundown (AI inference costs), The Signals (AI inference, token budget governance)

5. The AI Daily Brief: Artificial Intelligence News and Analysis — "Anthropic Accidentally Revealed Their Most Powerful Model Ever"

Runtime: 28 min | Host: Nathaniel Whittemore (Host, The AI Daily Brief)

Listen if: You're evaluating whether general LLMs are still the best fit for your specific business needs or if specialized models are the future.

Nathaniel Whittemore discusses how specialized vertical AI models, trained on real-world usage data, can now outperform leading frontier models at lower cost. This development challenges the "bitter lesson" of AI and signals a potential shift where differentiation will move to the model layer, with significant implications for major AI labs.

"It means that vertical models can and will outperform general models. It means that many successful companies in the future will need to be full stack, app layer, AI layer and model layer."

— Paul Adams, Chief Product Officer at Intercom

Connects to: The Rundown (specialized AI models), The Signals (vertical AI models vs. General LLMs)

6. "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis — "Your Agent's Self-Improving Swiss Army Knife: Composio CTO Karan Vaidya on Building Smart Tools"

Runtime: 99 min | Guests: Karan Vaidya (CTO, Composio), Nathan Labenz (Host, The Cognitive Revolution)

Listen if: You're building or integrating AI agents and need to understand the latest in tool execution and continuous learning for agents.

Karan Vaidya introduces Composio, a "smart tool" platform that enables AI agents to access over 50,000 tools across 1,000+ apps through a single, agentic execution layer. He highlights Composio's continual learning processes, which generate and deploy improved tool versions in real-time, and how strong tooling can help developers avoid model lock-in.

"So you're on point in understanding the problem that we solve, that we provide thousand plus apps, 50,000 plus tools to your agents, to anybody building agents. But that's not the like the final solution. Because if at this point where like in the LLM journey we are, if you provide thousand tools to the agent, it will probably use the wrong blade and suicide via context overload."

— Karan Vaidya, CTO of Composio

Connects to: The Rundown (agent-native architecture), The Signals (Composio, skills as an abstraction layer)
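
The context-overload problem in the quote above can be illustrated with a small Python sketch: instead of handing an agent the entire catalog, shortlist a few tools that look relevant to the task. This is not Composio's API or method. The `shortlist_tools` function, the word-overlap scoring, and the four-entry catalog are hypothetical and chosen only to keep the example self-contained.

```python
import re


def tokenize(text):
    """Lowercase a string and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def shortlist_tools(task, catalog, limit=3):
    """Return up to `limit` tool names whose descriptions share words with the task.

    `catalog` maps a tool name to a plain-text description. Scoring is a simple
    word-overlap count; tools with no overlap are dropped.
    """
    task_words = tokenize(task)
    scored = []
    for name, description in catalog.items():
        overlap = len(task_words & tokenize(description))
        if overlap:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:limit]]


# Hypothetical catalog; a real one would hold thousands of entries.
catalog = {
    "calendar.create_event": "create a calendar event with attendees",
    "email.send": "send an email message to a recipient",
    "crm.update_contact": "update a contact record in the crm",
    "email.search": "search email messages by keyword",
}
print(shortlist_tools("send an email to the new contact", catalog, limit=2))
# ['email.send', 'crm.update_contact']
```

A production system would likely rank with embeddings or learned signals rather than raw word overlap, but the design choice is the same: the agent only sees a handful of candidate tools per task.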

7. The AI in Business Podcast — "What Global Tariff Uncertainty Means for Supply Chain Leaders - with Edmund Zagorin of Arkestro and Michael Shin of Trinity Rail Industries"

Runtime: 46 min | Guests: Edmund Zagorin (Founding Chief Strategy Officer, Arkestro), Mike Shin (Chief Supply Chain Officer, Trinity Rail Industries), Daniel Faggella (CEO and Head of Research, Emerj)

Listen if: You're a supply chain leader navigating geopolitical volatility and seeking AI solutions for proactive procurement.

Edmund Zagorin and Mike Shin discuss how AI-driven "predictive procurement" can help supply chains balance speed, cost, capacity, and risk amidst global tariff uncertainty. They emphasize AI's role in proactive sourcing, automated contract analysis, and improved supplier collaboration, moving beyond reactive quoting.

"Supply chains today are in a double bind where they have to be extremely fast, extremely efficient, especially cost efficient. But also if they can't be agile as well and build in that resiliency and capacity, then when the world changes, people can get caught short."

— Edmund Zagorin, Founding Chief Strategy Officer at Arkestro

Connects to: The Signals (Predictive Procurement, geopolitical risk)

8. The AI Daily Brief: Artificial Intelligence News and Analysis — "Work AGI is the Only AGI that Matters"

Runtime: 26 min | Host: Nathaniel Whittemore (Host, The AI Daily Brief)

Listen if: You're tracking OpenAI's strategic shifts and the broader market implications for "work-focused" AI.

Nathaniel Whittemore covers OpenAI's strategic pivot away from projects like Sora due to compute constraints, refocusing on coding and knowledge work. The company's 'AGI Deployment' division and upcoming 'Spud' model signify a strong commitment to work-focused AI, challenging the concept of a singular, all-encompassing AGI.

"OpenAI has renewed focus on coding and knowledge work from OpenAI. Sam Altman told staff on Tuesday that he would be changing and in some many ways reducing his role."

— Nathaniel Whittemore, Host of The AI Daily Brief

Connects to: The Signals (OpenAI's strategic shift), The Big Shift (monolithic AGI vs. distributed intelligence)

9. The Neuron: AI Explained — "How to Be "Agent Native" in 2026 w/ Every CEO Dan Shipper"

Runtime: 98 min | Guests: Dan Shipper (CEO, Every), Corey Noles (Host, The Neuron), Grant Harvey (Host, The Neuron)

Listen if: You're building new software or adapting existing systems for an AI-first future.

Dan Shipper shares Every's approach to "agent-native" software development, where AI is the primary author and user. He discusses the concept of "compound engineering" and how designing for AI interaction leads to continuous improvement and more detailed bug reports from agents, fundamentally shifting software architecture.

"Every time there is a new model update, you have to figure out how to use your product, how to build your product and also modify your workflow to get the absolute most you can out of the model. And that's the way that you take advantage of model progress."

— Dan Shipper, CEO of Every

Connects to: The Rundown (agent-native architecture), The Signals (Every, Dan Shipper)

10. Last Week in AI — "#238 - GPT 5.4 mini, OpenAI Pivot, Mamba 3, Attention Residuals"

Runtime: 121 min | Guests: Andrey Kurenkov (Host, Astrocade), Jeremie Harris (Host, Gladstone.AI)

Listen if: You want to catch up on the latest model releases and the competitive landscape of AI ecosystems.

Andrey Kurenkov and Jeremie Harris analyze OpenAI's GPT-5.4 mini/nano models, Mistral's Small 4, and the burgeoning competition in agent "operating systems." They discuss the strategic pivot of OpenAI towards enterprise services and the implications of new GPU technologies, highlighting the increasing demand for model quality over just cost efficiency.

"Instead of racing to the bottom on inference costs, we're going to focus on model quality and that that's going to be our big differentiator. You're going to care that we can get the right answer, not that we can get it cheaply, which is where all the margin is."

— Andrey Kurenkov, Host at Astrocade

Connects to: The Signals (GPT-5.4 mini, Mistral, OpenAI's strategic shift)

11. Decoder with Nilay Patel — "Confronting the CEO of the AI company that impersonated me"

Runtime: 76 min | Guests: Nilay Patel (Editor-in-chief and Host of Decoder, The Verge), Shishir Mehrotra (CEO, Superhuman)

Listen if: You're concerned about AI ethics, intellectual property, and establishing trust with users in the age of generative AI.

Nilay Patel confronts Superhuman CEO Shishir Mehrotra about Grammarly's use of public figures' names without permission. The discussion delves into the ethical and legal complexities of using likenesses for commercial purposes, the distinction between copyright and identity claims, and the broader negative public perception of AI due to job displacement fears.

"If you use my likeness, how much should you have to pay me? We should not be able to impersonate you, period."

— Nilay Patel, Editor-in-chief of The Verge and Host of Decoder

Connects to: The Signals (Grammarly, Name and Likeness Claims, AI regulation)

12. "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis — "Scaling Intelligence Out: Cisco's Vision for the Internet of Cognition, with Vijoy Pandey"

Runtime: 96 min | Guests: Vijoy Pandey (SVP and GM, Outshift by Cisco), Nathan Labenz (Host, The Cognitive Revolution)

Listen if: You're evaluating long-term AI strategy beyond single-model deployments and into distributed, collaborative AI systems.

Nathan Labenz and Vijoy Pandey discuss Cisco's "Internet of Cognition" vision, which emphasizes horizontal scaling of AI through distributed agent collaboration. This shifts focus from vertical model improvement to creating a framework for agents to share context, build trust, and solve problems in shared spaces, mirroring the evolution of human society through language.

"What we're calling the Internet of Cognition because it is going to be distributed across a bunch of agents who by definition will come from different vendors because they're all subject matter experts, they will not come from the same vendor and they will all need to come together, collaborate and solve for this net new problem space."

— Vijoy Pandey, SVP and GM, Outshift by Cisco

Connects to: The Big Shift (Internet of Cognition), The Signals (Cisco, Vijoy Pandey, Internet of Cognition)