The AI conversation has moved from "can it do it?" to "what are the rules if it does?"

The Intake

📊 12 episodes across 12 podcasts

⏱ 624 minutes of intelligence analyzed

🎙 Featuring: Danny Wu (Canva), Corey Knowles (The Neuron: AI Explained), Grant Harvey (The Neuron: AI Explained), Danny V M (Canva)

The Big Shift

AI's Coming of Age: From Efficiency Tool to Ethical Battleground

The AI narrative is rapidly maturing, shifting from a focus on raw capabilities and efficiency gains to a confrontational engagement with ethical dilemmas, governance, and societal impact. We're past the "can AI write an essay?" phase; the conversation is now about AI agents dynamically designing other agents, autonomous systems making real-world decisions, and militaries integrating AI into battle planning. This isn't just about responsible deployment; it's about defining the boundaries of AI's independence and challenging power structures that seek to co-opt it for mass surveillance or warfare. The days of tech companies quietly removing ethical clauses around military use, as observed by Kevin Roose of The New York Times on Hard Fork, are increasingly met with resistance, exemplified by Anthropic's public standoff with the Pentagon. This marks a pivotal moment where AI is no longer a benign tool to optimize workflows, but a force demanding ethical frameworks that reflect its growing power and widespread influence across all sectors.

"I think it shows where the AI ecosystem is probably going to be heading next. I think a lot of AI agents are becoming more capable. They're starting to interact not just with humans, but with each other."

— Jaden Schaefer, Host of AI Breakdown

Why it matters: This shift mandates that leaders move beyond just contemplating AI strategy to actively shaping organizational AI ethics and governance frameworks. The implications touch everything from IP and data integrity to military applications and workforce mental health.

The move: Prioritize establishing clear ethical guidelines for AI use within your organization, focusing on data sourcing, attribution, and the level of human oversight required for agentic systems. Engage with legal and privacy experts to navigate emerging challenges like the "third-party doctrine."

The Rundown

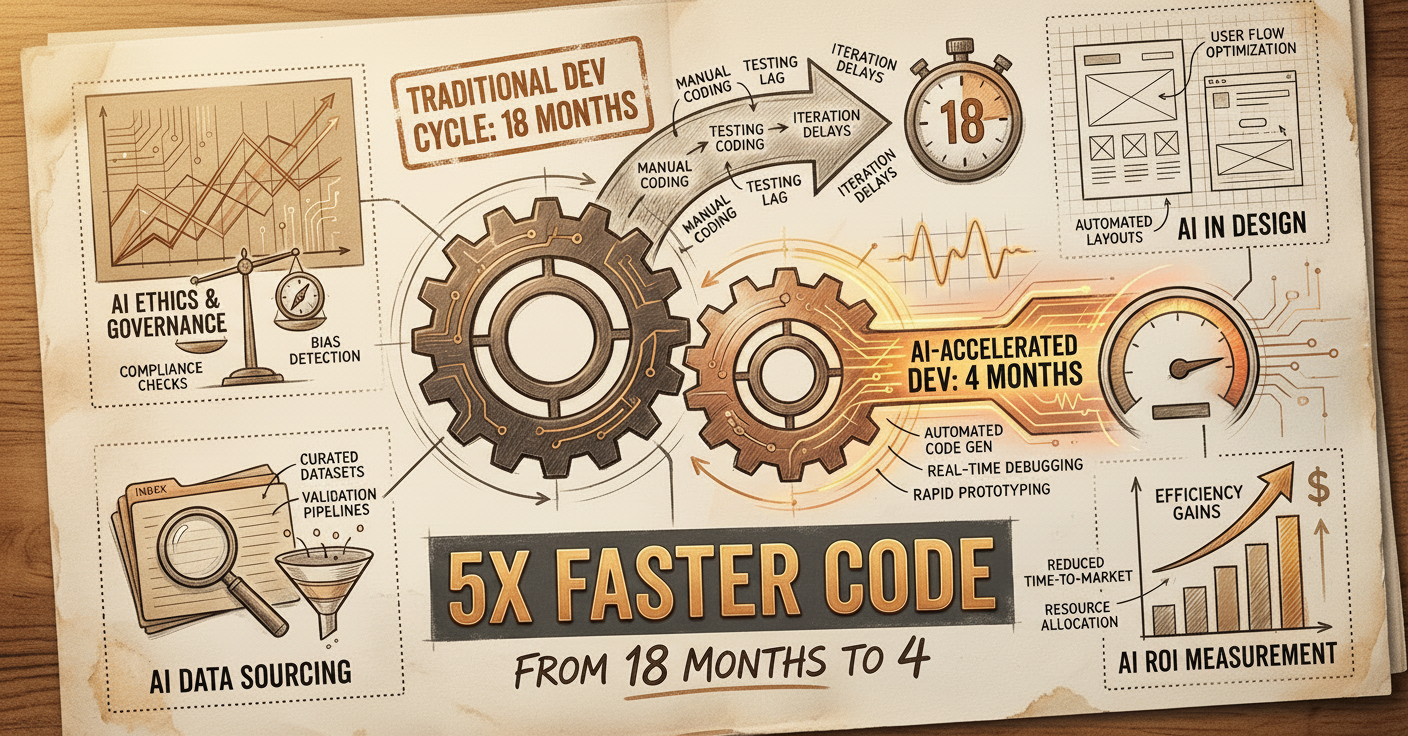

① AI-driven autonomous software development is outpacing traditional methods by 5x.

Blitzy Co-founder and CTO Siddhant Pardeshi explained on The TWIML AI Podcast (formerly This Week in Machine Learning & Artificial Intelligence) that code is a commodity and that the real challenge for AI is "code acceptance": security, standards, and maintainability. Blitzy's agent swarms and knowledge graphs are built to deliver accepted code at scale, which he said reduces 18 months of work to 3-4 months.

→ Why it matters: If you're still seeing software development as a purely human-coded pipeline, you're missing a massive acceleration opportunity. The focus needs to shift from writing code to defining acceptance criteria and managing agentic workflows for quality and compliance.

② Canva's AI is designing editable, layered outputs, not just flat images.

Canva's Head of AI Products, Danny Wu, highlighted on The Neuron: AI Explained the company's "Creative Operating System" approach, in which AI generates the underlying editable assets and elements, vastly expanding the definition of design and offering deep personalization. As Wu put it, "[w]hen you start treating AI as individual tools like paintbrushes, you can use your toolkit and try to build your own repeatable and scalable and high velocity workflows."

→ What to watch: This indicates a major leap beyond generative AI for static media. Design software will soon be less about creating from scratch and more about directing AI to produce fully editable, brand-compliant assets, demanding new skill sets for designers and marketers.

③ Measuring the ROI of your data team through internal sentiment, not just dashboards.

Barry McCardel, Co-founder and CEO of Hex, discussed on The AI in Business Podcast that dashboards often raise more questions than they answer, leading to "tool creep." He argued that the true ROI of a data team is best reflected in internal Net Promoter Score from other departments, indicating effective service and collaboration, rather than quantitative usage logs.

→ The context: This is a crucial reframe for leaders struggling to quantify the value of their data investments. Prioritize fostering internal partnerships and responsiveness from your data teams; a quantifiable "NPS" for data services might be more telling than dashboard clicks.
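For readers who want to put a number on that idea, here is a minimal sketch of how an internal NPS could be computed, assuming the standard Net Promoter convention (0-10 survey scores, 9-10 counted as promoters, 0-6 as detractors). The department names and responses are hypothetical and are not from the episode.

```python
from typing import Iterable


def net_promoter_score(scores: Iterable[int]) -> float:
    """Return NPS (-100 to 100) from 0-10 survey responses."""
    scores = list(scores)
    if not scores:
        raise ValueError("need at least one response")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("scores must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)   # 9-10
    detractors = sum(1 for s in scores if s <= 6)  # 0-6
    # Passives (7-8) count toward the total but toward neither group.
    return 100.0 * (promoters - detractors) / len(scores)


# Hypothetical answers to "How likely are you to recommend the data team?"
responses = {
    "marketing": [10, 9, 8, 6],
    "finance": [9, 9, 10, 7, 3],
}

for dept, dept_scores in responses.items():
    print(f"{dept}: {net_promoter_score(dept_scores):+.0f}")
```

Tracking the score per department over time, rather than as a one-off figure, is closer to the "effective service and collaboration" signal described above.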

④ The true frontier for LLMs is in specialized tasks, not just internet-scale data.

Andrey Kurenkov on Last Week in AI suggested that while pre-training on internet-scale data is useful, the next leap for AI will come from models excelling in "real tasks" where that broad pre-training isn't as critical. This implies a move towards highly specialized, domain-specific agents.

→ What to watch: Generic LLMs are reaching their limits for certain enterprise applications. Look for AI solutions that are purpose-built and trained for specific business functions, as they will likely offer superior performance and less "hallucination."

⑤ Human judgment remains critical for pushing AI capabilities beyond the obvious.

Phelim Bradley, Co-founder and CEO of Prolific, explained on Eye On A.I. that "the human judgment is where the alpha is" when it comes to AI. If you want to develop AI that outperforms existing models, you need human evaluators to identify gaps and offer novel insights that automated systems can't yet replicate.

→ Why it matters: Don't fall into the trap of full automation for AI evaluation. Human-in-the-loop systems are essential for innovation, safety, and cultural relevance. Investing in platforms that connect researchers with diverse human evaluators is key to gaining an edge.

⑥ The lack of ethically sourced, diverse datasets is the core problem in AI bias.

Sony AI's Alice Xiang, on Me, Myself, and AI, discussed how the pervasive issue isn't just measuring bias, but the fundamental absence of quality, representative, and ethically obtained data for evaluating AI models. She introduced FHIBE (Fair Human-Centric Image Benchmark, pronounced "Phoebe") as a benchmark sourced with consent and compensation, countering "data nihilism."

→ The context: Focusing solely on technical bias mitigation without addressing the upstream data sourcing problem is putting a band-aid on a bullet wound. Companies need to invest in "clean" data acquisition processes, treating ethical data as a foundational requirement, not an afterthought.

⑦ Biosecurity data controls are critical, but only for "functional data" of pandemic potential.

Jassi Pannu, Assistant Professor at Johns Hopkins, on "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis argued for data controls only on "functional data" for viruses with pandemic potential (transmissibility, immune evasion), while allowing open access to sequence data. This protects against misuse without stifling fundamental research, aligning with existing US Government wet lab regulations.

→ Why it matters: This nuanced approach to data governance is a blueprint for other high-stakes domains. Rather than broad, restrictive bans, targeted controls on "functional" or risk-enhancing data can allow for innovation while mitigating true existential threats.

The Signals

👍 Heating Up

• Agent swarm and knowledge graph architectures: Enabling hyperscaled autonomous software development, accelerating project timelines significantly. (Siddhant Pardeshi on The TWIML AI Podcast (formerly This Week in Machine Learning & Artificial Intelligence))

• Anthropic's Public Resistance to Military Surveillance: A new ethical stance by a major AI player against government overreach in surveillance, signaling a potential shift in tech-government relations. (Mike Masnick on Decoder with Nilay Patel)

• AI-as-a-Service for User Acquisition: Canva leverages free AI tools on platforms like ChatGPT as a new "SEO" to drive user acquisition without product cannibalization. (Danny V M on The Neuron: AI Explained)

• Meta's Acquisition of Moltbook: Signals a strategic move into understanding and orchestrating agent-to-agent communication, predicting a future of intertwined AI entities. (Jaden Schaefer on AI Breakdown)

🆕 On Watch

• AI brain fry 🆕: A newly identified cognitive strain from excessive AI use and oversight, impacting productivity and mental well-being in the workplace. (Julie Bedard on Hard Fork)

• AI in military applications in Iran 🆕: Highlights a dramatic escalation in geopolitical conflict where AI infrastructure and capabilities are now strategic targets. (Kevin Roose on Hard Fork)

• Ethically sourced data 🆕 (for AI models/benchmarks): The fundamental need for ethically sourced, diverse data to combat AI bias, emphasized by Sony AI's FHIBE ("Phoebe") benchmark. (Alice Xiang on Me, Myself, and AI)

• Biosecurity Data Level framework 🆕: A proposed tiered system for controlling biological functional data to mitigate bioweapon risks while fostering defensive research. (Jassi Pannu on "The Cognitive Revolution" | AI Builders, Researchers, and Live Player Analysis)

📉 Cooling Off

• Traditional Academic AI Benchmarks: Their usefulness is decreasing as models are optimized to ace them, making them less reliable for real-world evaluation. (Phelim Bradley on Eye On A.I.)

• Chief AI Officer (CAIO) role: Criticized for its "toy mentality" approach to AI, often failing to integrate AI strategically and leading to high project failure rates. (Daniel Faggella on The AI in Business Podcast)

The Debate

Is the controversy around Anthropic's Claude Code Review pricing about cost or existential anxiety?

The controversy surrounding Anthropic's new AI code review feature (Claude Code Review), priced at $15-$25 per pull request, sparked widespread debate. While many expressed shock at the direct cost, a deeper divergence emerged: is this about price or a harbinger of a more fundamental shift?

🐂 The bull case: It's about a new pricing model for specialized AI labor.

Dan Adler, Sourcegraph CEO, argued that "Tens or even hundreds of millions of dollars in engineering organizations that cost billions in salaries seems reasonable." This perspective views the pricing as justified by the value delivered, aligning AI tool costs with the labor they either augment or replace. It's a redefinition of value for specialized AI services that aims to capture a share of the productivity gains.
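To make the bull-case arithmetic concrete, the sketch below compares annual review spend at the quoted $15-$25 per pull request with engineering payroll. Only the per-PR price range comes from the episode; the headcount, pull-request volume, and salary figures are hypothetical inputs chosen for illustration.

```python
from typing import Tuple


def annual_review_cost(prs_per_year: int,
                       low: float = 15.0,
                       high: float = 25.0) -> Tuple[float, float]:
    """Low and high annual spend at the quoted per-PR price range."""
    return prs_per_year * low, prs_per_year * high


def share_of_payroll(cost: float, engineers: int, avg_salary: float) -> float:
    """Review spend as a fraction of total engineering salaries."""
    return cost / (engineers * avg_salary)


# Hypothetical organization (assumed values, not from the source).
engineers = 1_000
prs_per_engineer = 200      # pull requests per engineer per year
avg_salary = 200_000.0      # USD per engineer per year

low, high = annual_review_cost(engineers * prs_per_engineer)
print(f"Annual review spend: ${low:,.0f} to ${high:,.0f}")
print(f"Share of payroll: {share_of_payroll(low, engineers, avg_salary):.1%}"
      f" to {share_of_payroll(high, engineers, avg_salary):.1%}")
```

Under these assumed inputs the spend is a low single-digit percentage of salaries; the ratio shifts quickly with pull-request volume, so the inputs are worth replacing with your own.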

🐻 The bear case: It's about developer existential dread and the end of traditional workflows.

On The AI Daily Brief: Artificial Intelligence News and Analysis, Nathaniel Whittemore highlighted Boris Taine's view that "[c]linging to the PR workflow in an agent driven world isn't rigor, it's an identity crisis." This suggests the pricing shock masks a deeper anxiety among developers about the obsolescence of traditional workflows, like manual code review, in an agent-driven world where pricing reflects AI's ability to take over entire processes, not just assist.

Our read: The pricing is a provocative move designed to force a re-evaluation of value—not just cost. It signals that foundational AI models are ready to absorb specialized tasks, and the market is still grappling with how to price and integrate this new form of "digital labor."

The Bottom Line

AI is growing up fast, pushing autonomy and confronting us with profound ethical choices that demand proactive governance, not just technological adoption.

📖 Want the full episode breakdowns, guest details, and listen links?

Episode Guide

1. No Priors: Artificial Intelligence | Technology | Startups — "From Coder to Manager: Navigating the Shift to Agentic Engineering with Notion Co-Founder Simon Last"

Runtime: 29 min | Guests: Simon Last (Co-founder, Notion), Sarah Guo (Host, No Priors)

Who should listen: Founders and engineering leaders looking to deeply understand agentic workflows, and anyone managing a remote team looking for workflow insights.

Notion's co-founder explains his personal shift from coder to "agent manager," detailing how AI agents now handle coding and verification. The conversation highlights Notion's strategy to become a "Switzerland for models" by integrating various AI models and building agent-friendly APIs.

"I haven't written code since last summer... we talk to the agent and it does little tasks for us." — Simon Last, Co-founder at Notion

2. The Neuron: AI Explained — "24 Billion AI Uses Later: What Canva Learned About the Future of Design"

Runtime: 55 min | Guests: Danny Wu (Head of AI Products, Canva), Corey Knowles (Host, The Neuron), Grant Harvey (Interviewer, The Neuron), Danny V M (Head of AI, Canva)

Who should listen: Product leaders and marketers interested in how AI is redefining creative workflows and user acquisition strategies.

Canva's AI has moved beyond flat images to generate editable, layered designs, transforming the design process. They leverage free AI tools as a new acquisition channel and use advanced AI internally for complex coding, demonstrating a commitment to pushing design boundaries.

"You shouldn't be afraid to cannibalize your own product." — Danny V M, Head of AI at Canva

3. The AI in Business Podcast — "Building a Virtuous Cycle of Analytics in Global Enterprises - with Barry McCardel of Hex"

Runtime: 39 min | Guests: Daniel Faggella (Host, Emerj Artificial Intelligence Research), Barry McCardel (Co-founder and CEO, Hex)

Who should listen: Data leaders and CEOs grappling with data team ROI and effective AI adoption strategies for large enterprises.

Barry McCardel challenges the reliance on dashboards for data insights, arguing that a data team's true value is measured by internal sentiment and collaboration. He advocates for a "crawl, walk, run" approach to AI implementation, criticizing the "toy mentality" often seen in leadership.

"The problem is always like, dashboards raise more questions than answers." — Barry McCardel, Co-founder and CEO of Hex

4. The AI Daily Brief: Artificial Intelligence News and Analysis — "The Debate Over Anthropic’s New Product: Price or Existential Dread?"

Runtime: 26 min | Guests: Nathaniel Whittemore (Host, The AI Daily Brief: Artificial Intelligence News and Analysis)

Who should listen: Developers, product managers, and leaders trying to understand the deeper implications of AI pricing models and the future of work.

Nathaniel Whittemore delves into the controversy sparked by Anthropic's Claude Code Review's pricing ($15-$25 per pull request). The discussion explores whether the backlash is merely about cost or a deeper "existential anxiety" among developers as AI displaces traditional human workflows and disrupts entire industries.

"15 to 25 USD per review my lord." — Nick Schrock, Dagster Labs

5. The TWIML AI Podcast (formerly This Week in Machine Learning & Artificial Intelligence) — "Agent Swarms and Knowledge Graphs for Autonomous Software Development with Siddhant Pardeshi - #763"

Runtime: 76 min | Guests: Siddhant Pardeshi (Co-founder and CTO, Blitzy), Sam Charrington (Host, The TWIML AI Podcast)

Who should listen: Software engineering leaders and AI architects interested in the next generation of autonomous development and multi-agent systems.

Siddhant Pardeshi reveals how Blitzy enables autonomous software development by treating "code as a commodity" and focusing on "code acceptance." He details their use of a hybrid graph-plus-vector approach for codebase navigation and of dynamic agent personas to hyperscale complex changes, with agents designing other agents.

"code is a commodity... Getting AI to write code is very easy... Getting code that follows your standards... that is secure... is a completely different story." — Siddhant Pardeshi, Co-founder and CTO of Blitzy

6. Me, Myself, and AI — "An Industry Benchmark for Data Fairness: Sony’s Alice Xiang"

Runtime: 34 min | Guests: Sam Ransbotham (Host, MIT Sloan Management Review), Alice Xiang (Global Head of AI Governance and Lead Research Scientist for AI Ethics at Sony AI, Sony), Shayan (Guest, Thoughtworks)

Who should listen: AI ethicists, data scientists, and governance officers committed to building fair and responsible AI systems.

Sony AI's Alice Xiang discusses the critical shift from theoretical AI ethics to practical governance. She introduces FHIBE (pronounced "Phoebe"), an ethically sourced benchmark, arguing that the lack of ethically sourced data is a fundamental barrier to responsible AI, not just a technical challenge.

"The biggest initial barrier folks have is even just being able to evaluate for bias..." — Alice Xiang, Global Head of AI Governance and Lead Research Scientist for AI Ethics at Sony AI